“RAG platform” is the new buzzword everyone throws around like it’s interchangeable. It isn’t. The moment you try to deploy one inside a real company—with LDAP, SSO, messy PDFs, and external workflows—you realize quickly that most tools are optimized for demos, not infrastructure.

AnythingLLM and Dify are two of the more serious contenders in the self-hosted space. Both promise control, privacy, and extensibility. Both technically deliver.

But they diverge in ways that matter once you move beyond “upload a document and ask a question.”

This is not a surface-level comparison. This is what happens when you actually try to run them against enterprise data.

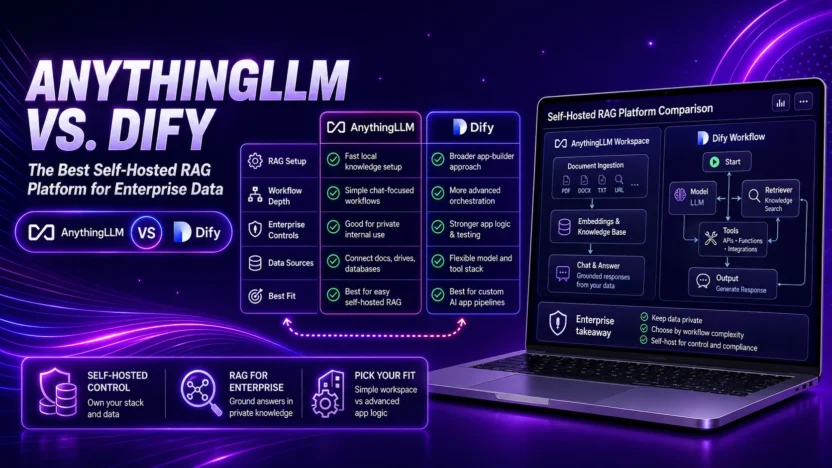

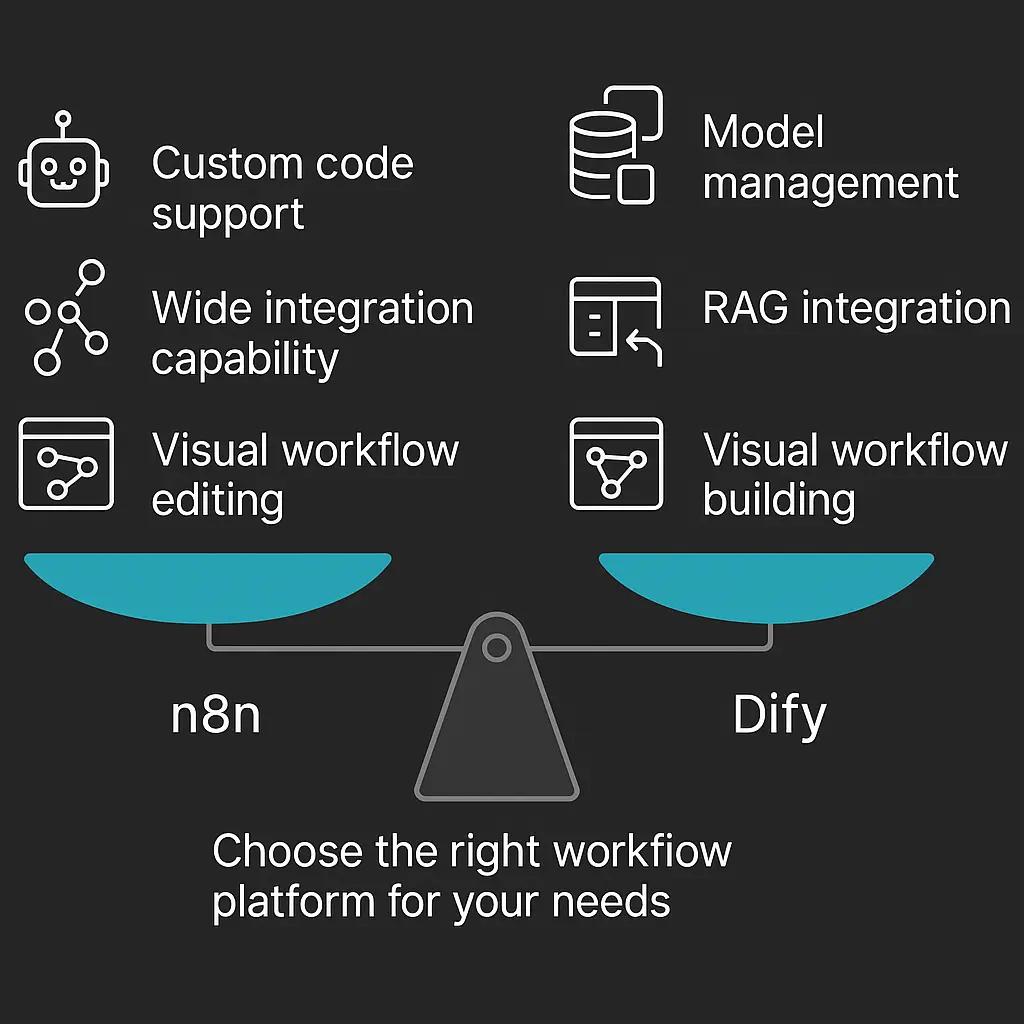

Architecture Philosophy: Controlled Workspace vs. Orchestrated AI Platform

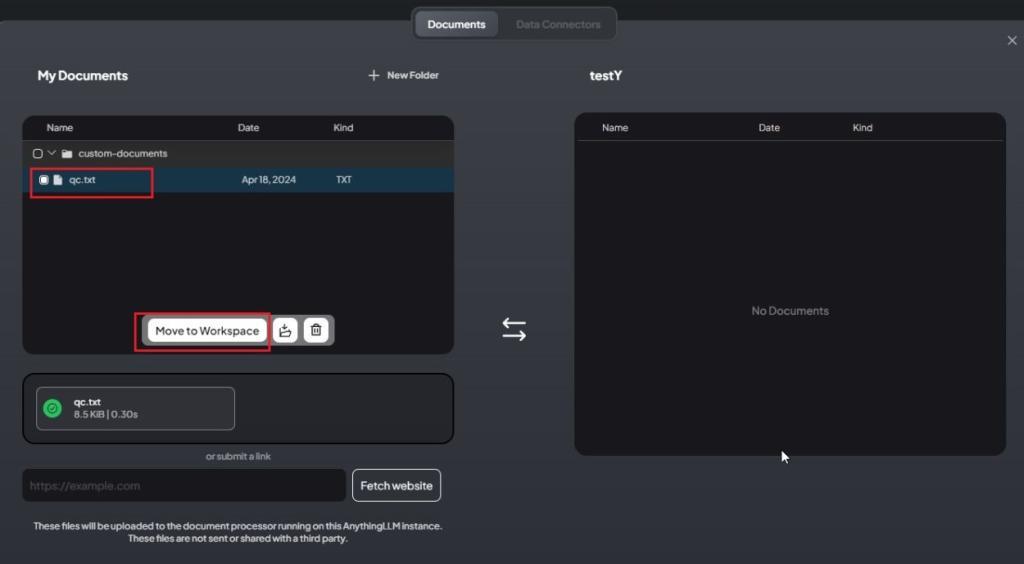

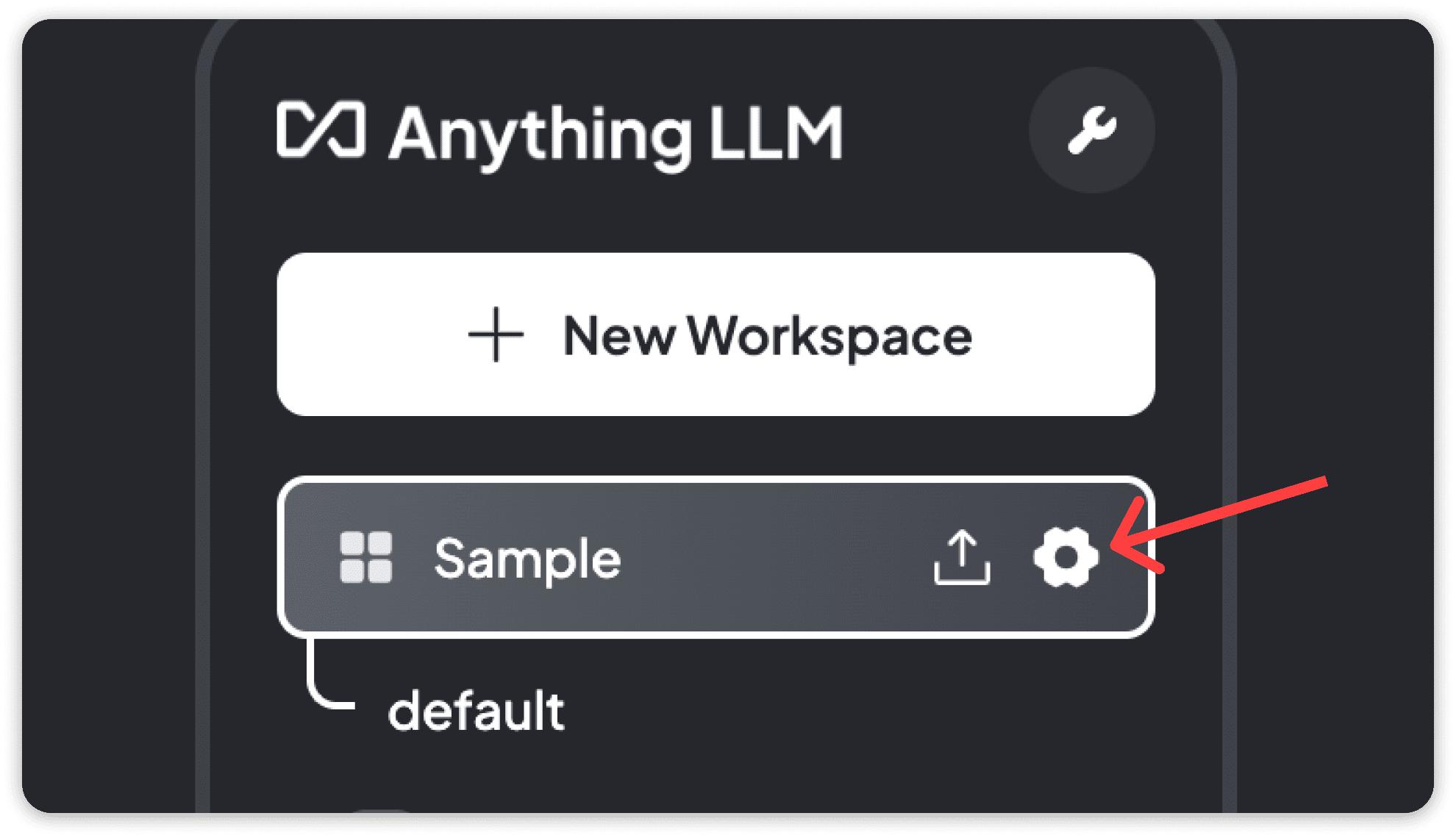

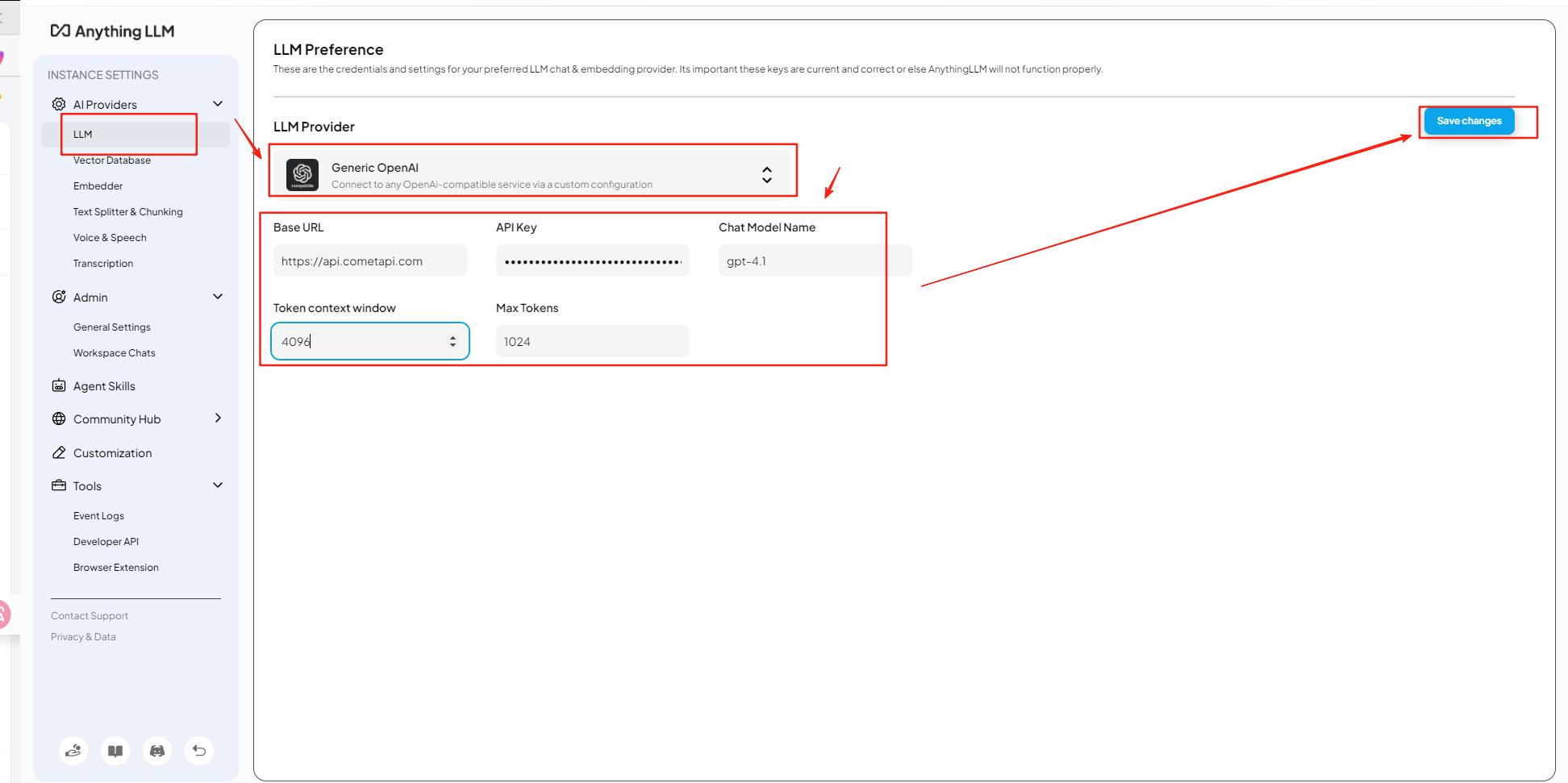

What the screenshot shows:

AnythingLLM’s workspace-based interface, where documents are attached to a workspace and queries are scoped within that context. The model interacts directly with indexed data tied to that workspace.

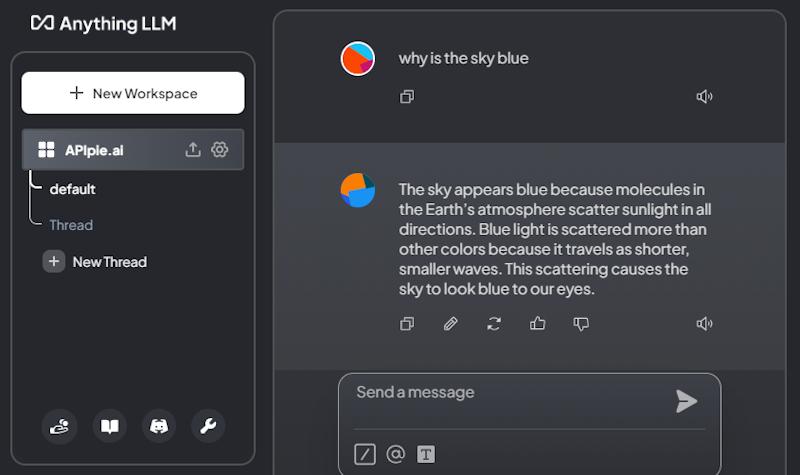

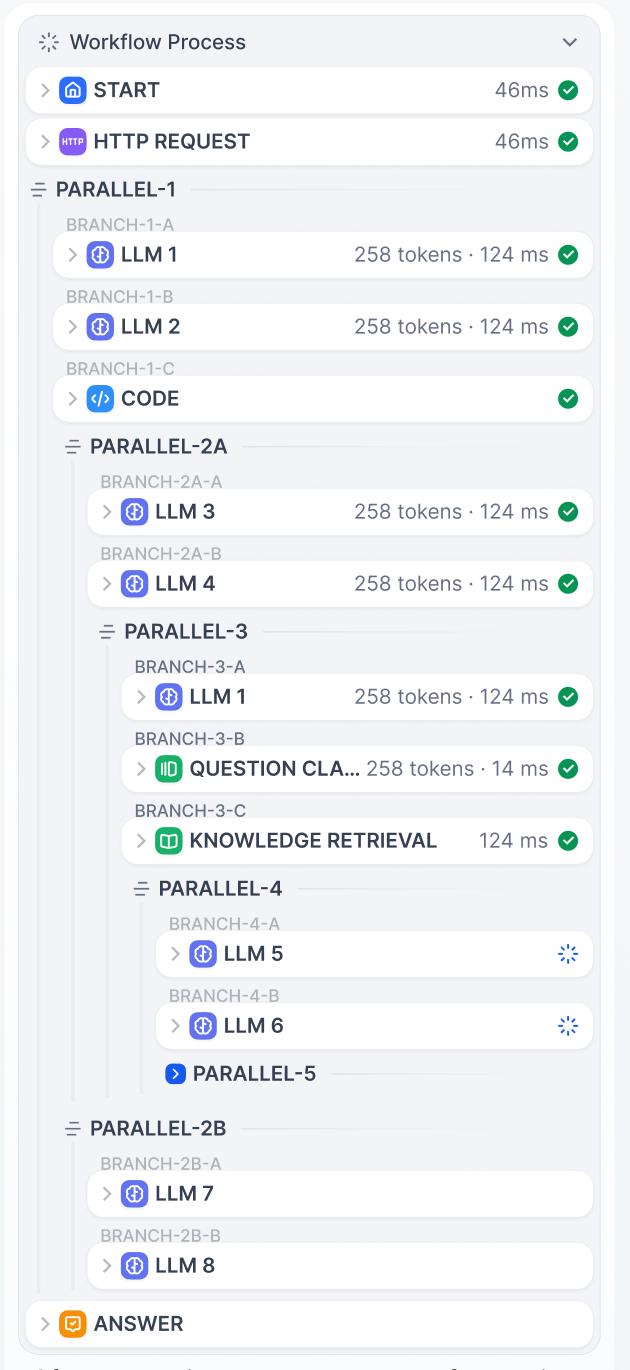

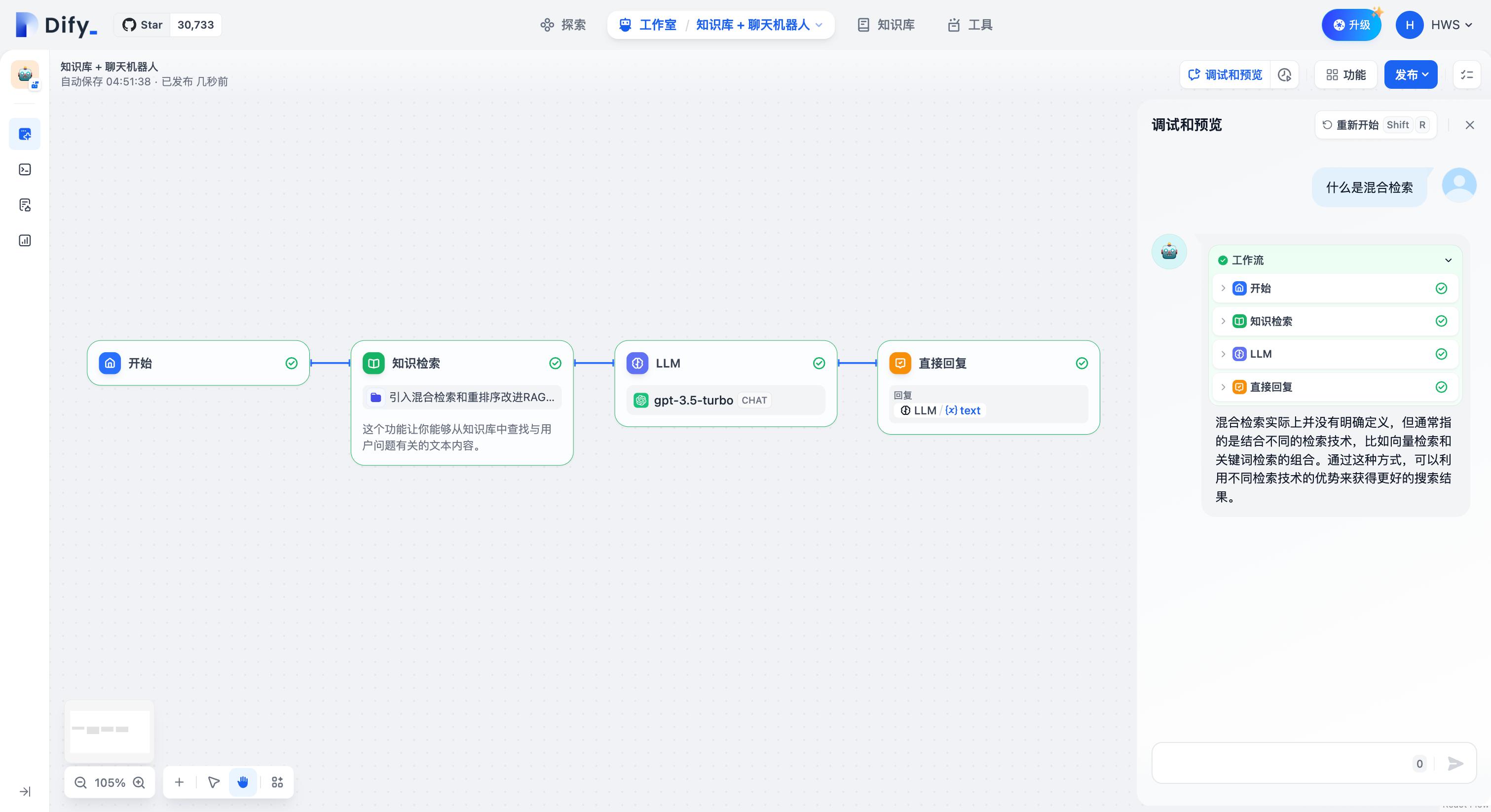

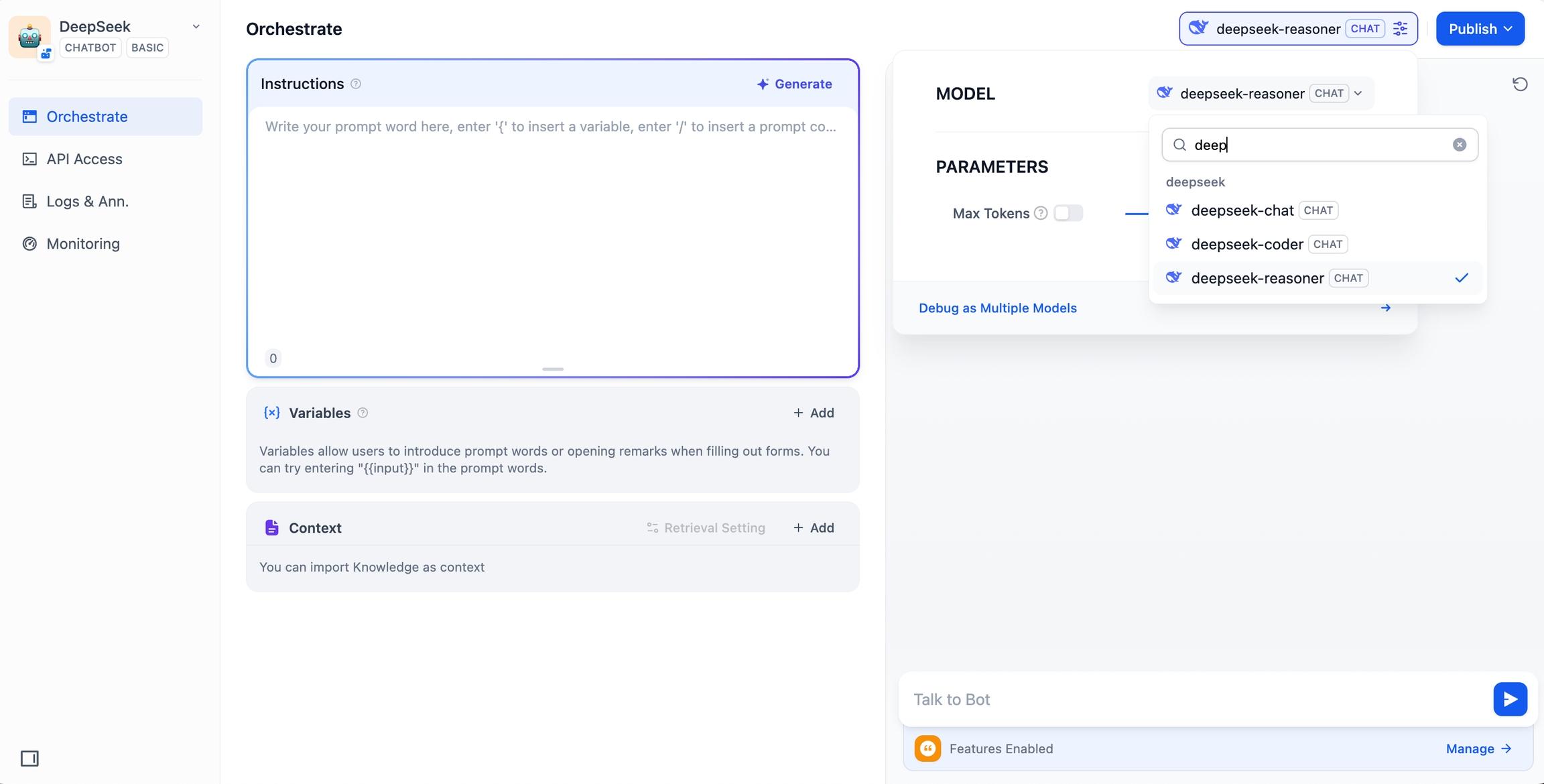

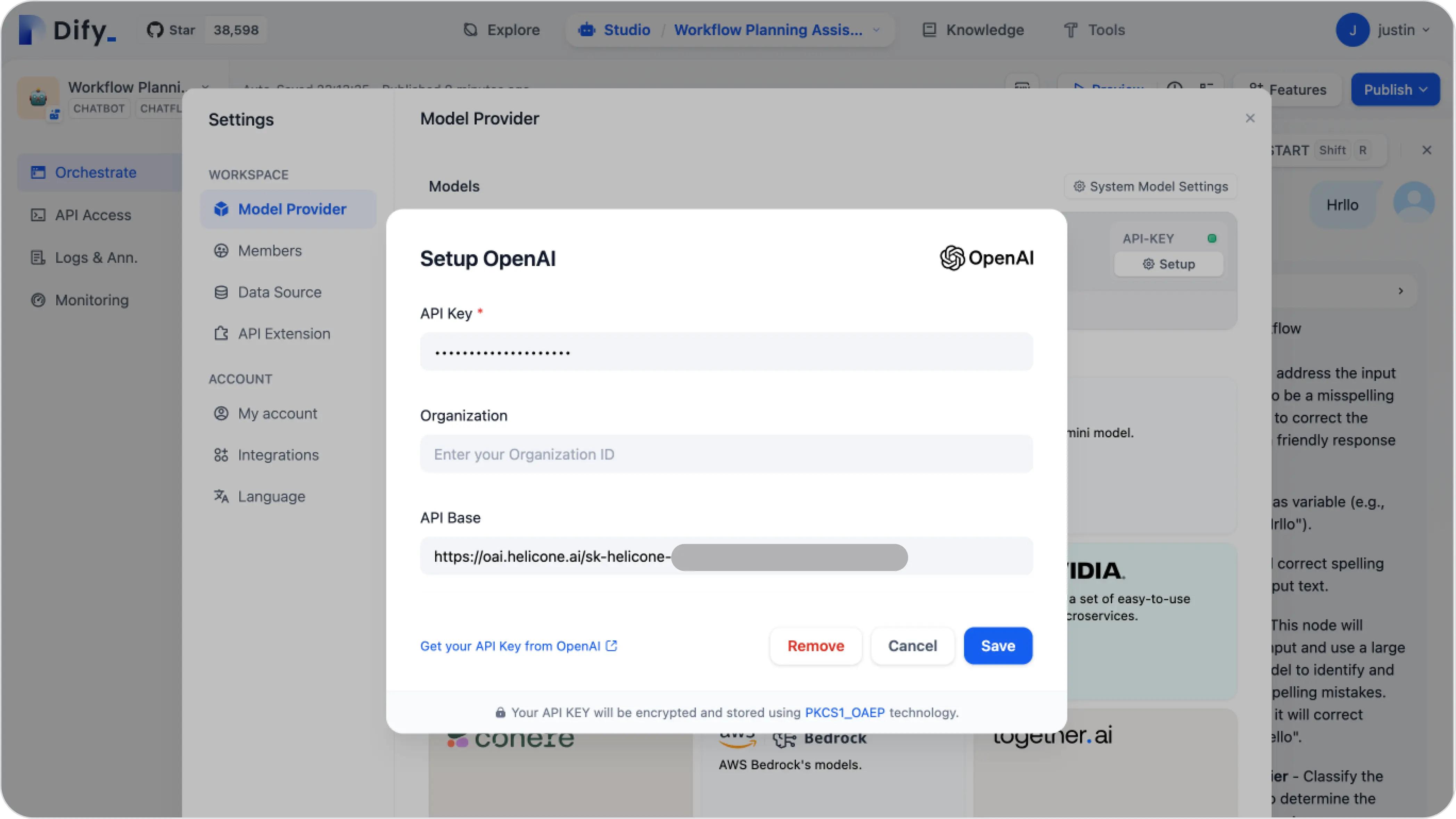

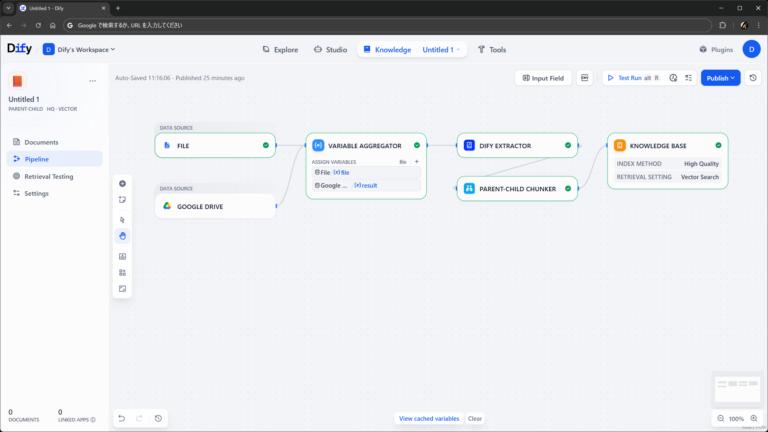

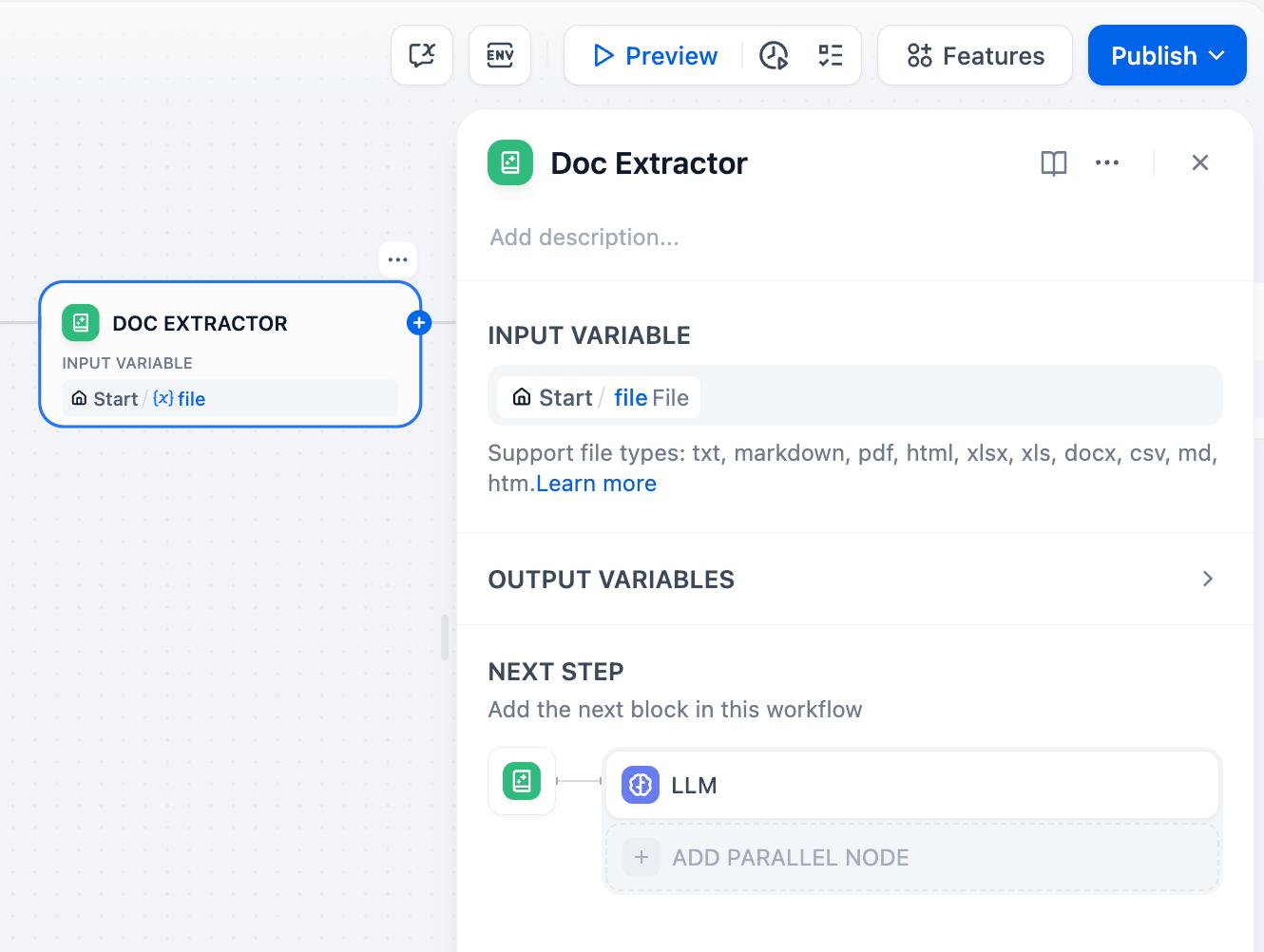

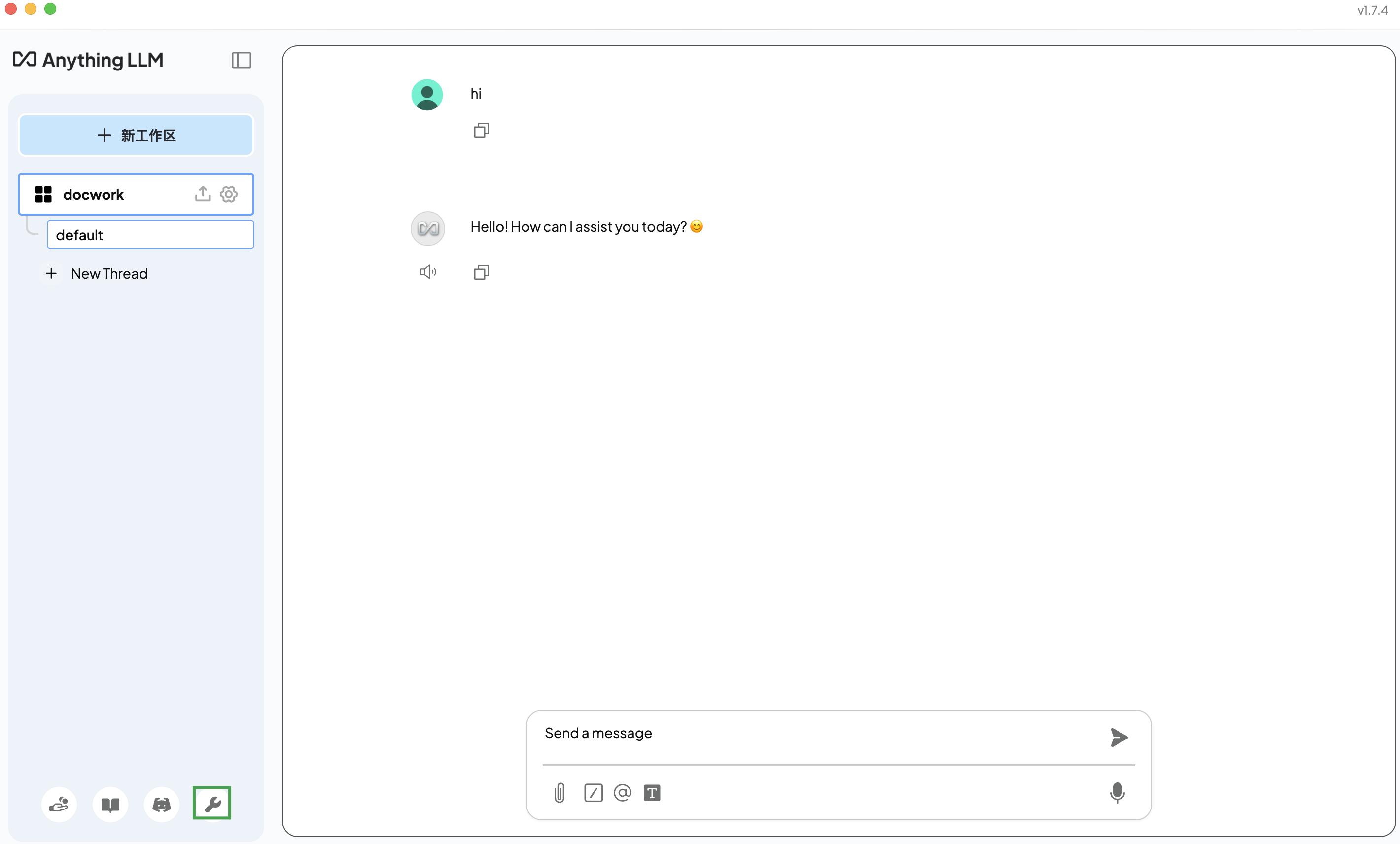

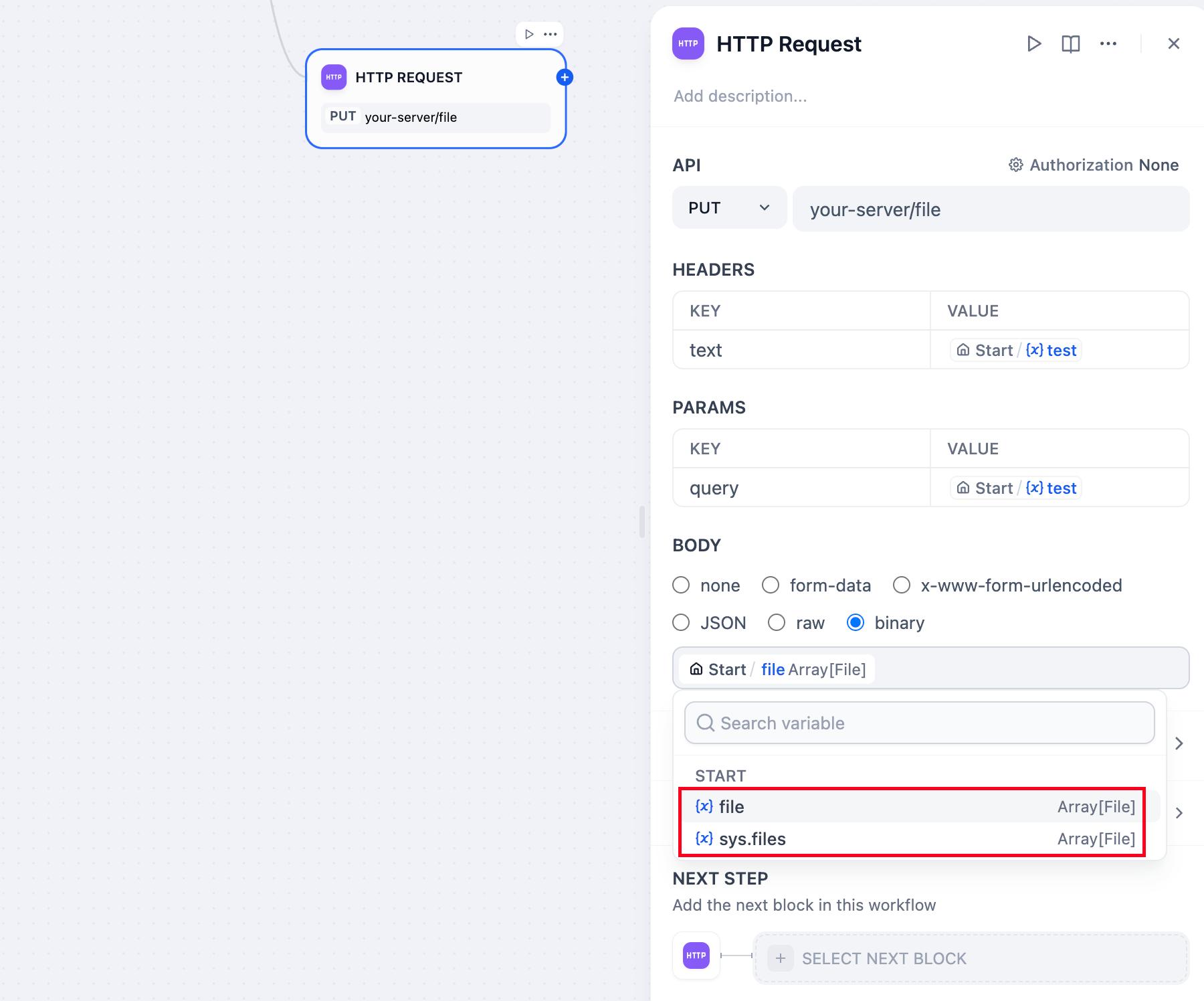

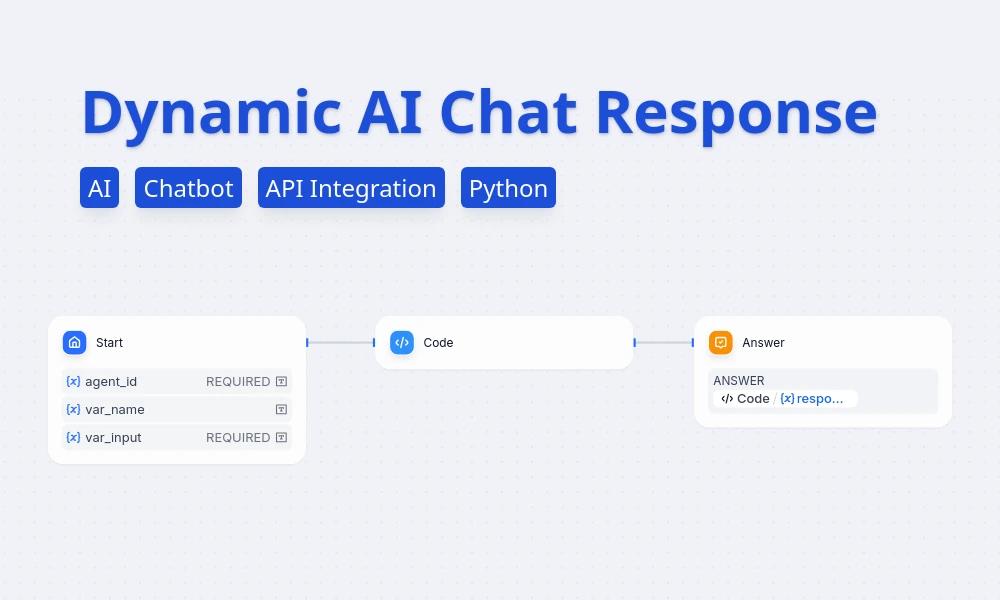

What the screenshot shows:

Dify’s orchestration-first interface, where RAG is part of a broader pipeline including prompts, tools, and workflow logic. It looks less like a document tool and more like an AI application builder.

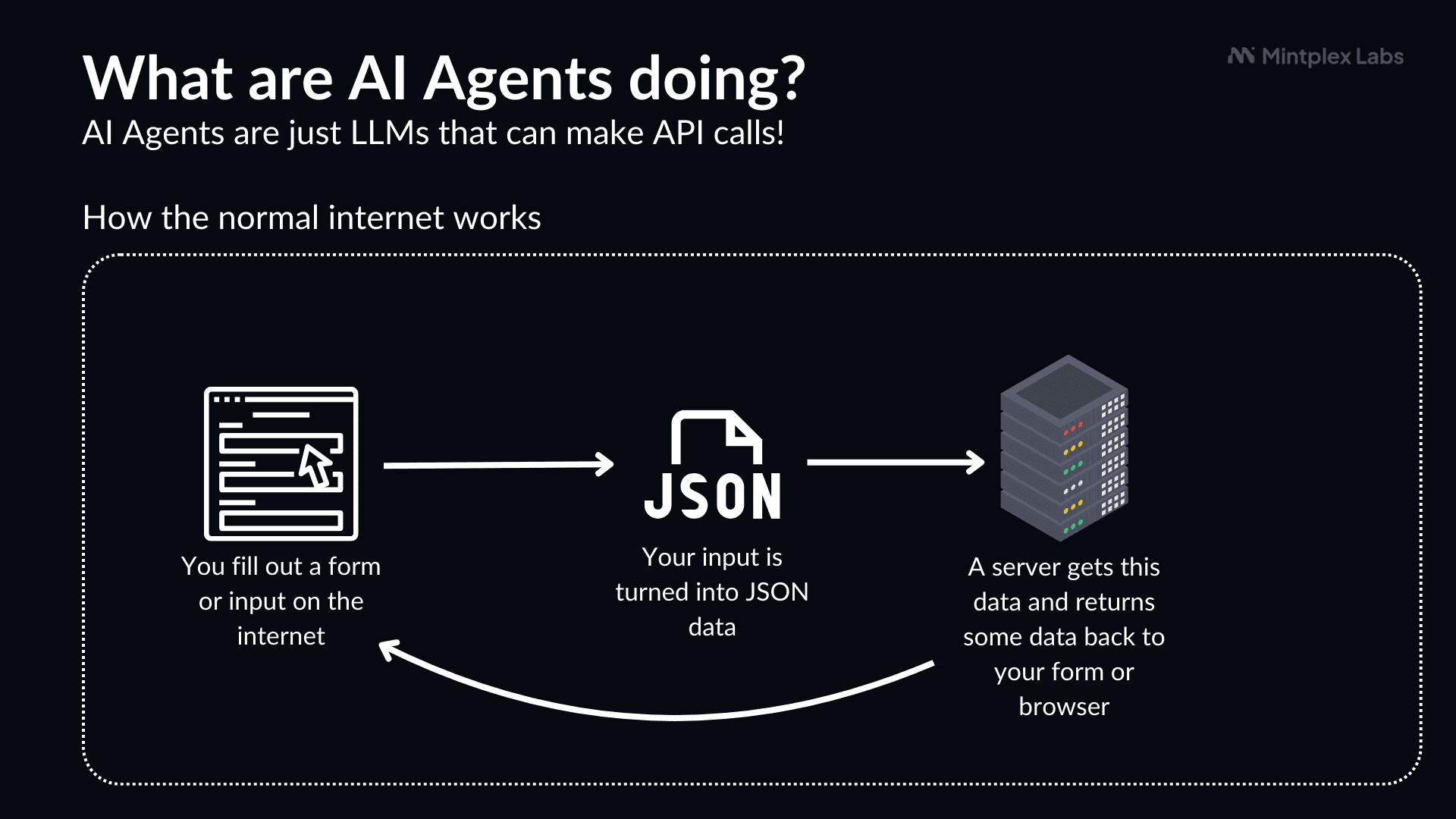

Here’s the core difference:

| Dimension | AnythingLLM | Dify |
|---|---|---|
| Mental model | Chat + documents | AI pipelines + apps |
| Setup complexity | Low | Medium–high |
| Flexibility | Limited but focused | High |
| Enterprise extensibility | Moderate | Strong |

AnythingLLM is a controlled environment.

Dify is a framework.
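A toy model can make the distinction concrete. Everything below (the `Workspace` and `Pipeline` classes, the keyword-overlap “retrieval”, the step functions) is hypothetical. It illustrates the shape of each approach, not either product’s internals.

```python
from dataclasses import dataclass, field
from typing import Callable


# Hypothetical "controlled workspace": documents belong to a workspace,
# and every question is scoped to that workspace.
@dataclass
class Workspace:
    name: str
    documents: list[str] = field(default_factory=list)

    def ask(self, question: str) -> list[str]:
        # Toy retrieval: return documents sharing any word with the question.
        words = set(question.lower().split())
        return [d for d in self.documents if words & set(d.lower().split())]


# Hypothetical "orchestrated platform": retrieval is one step among many.
Step = Callable[[dict], dict]


@dataclass
class Pipeline:
    steps: list[Step]

    def run(self, state: dict) -> dict:
        for step in self.steps:
            state = step(state)
        return state


def classify(state: dict) -> dict:
    # Branch on a parameterized input, e.g. the document type.
    return {**state, "strict": state.get("doc_type") == "legal"}


def retrieve(state: dict) -> dict:
    return {**state, "context": state["workspace"].ask(state["query"])}


def build_prompt(state: dict) -> dict:
    rule = "Quote sources verbatim." if state["strict"] else "Summarize freely."
    return {**state, "prompt": f"{rule}\nContext: {state['context']}\nQ: {state['query']}"}


ws = Workspace("sales-docs", ["Q3 revenue summary", "Office relocation memo"])
print(ws.ask("Q3 revenue"))  # ['Q3 revenue summary']

result = Pipeline([classify, retrieve, build_prompt]).run(
    {"workspace": ws, "query": "Q3 revenue", "doc_type": "legal"}
)
print(result["prompt"])
```

In the first model, the workspace is the product. In the second, the same retrieval call becomes one replaceable stage, and routing, prompting, and tools are stages beside it.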

LDAP / SSO Integration (Where Enterprise Reality Starts)

If your tool doesn’t integrate with your identity system, it’s not enterprise-ready. It’s a sandbox.

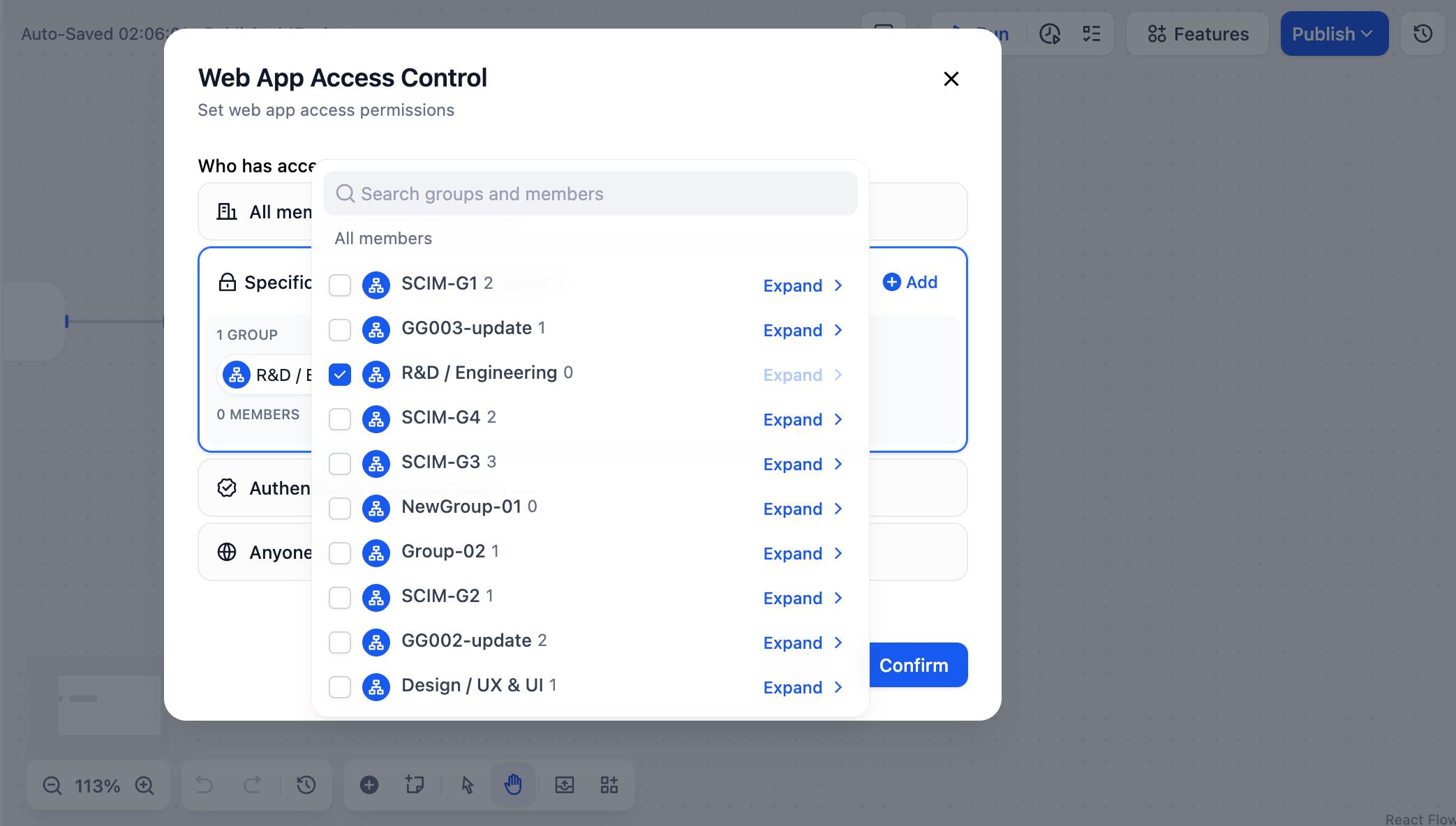

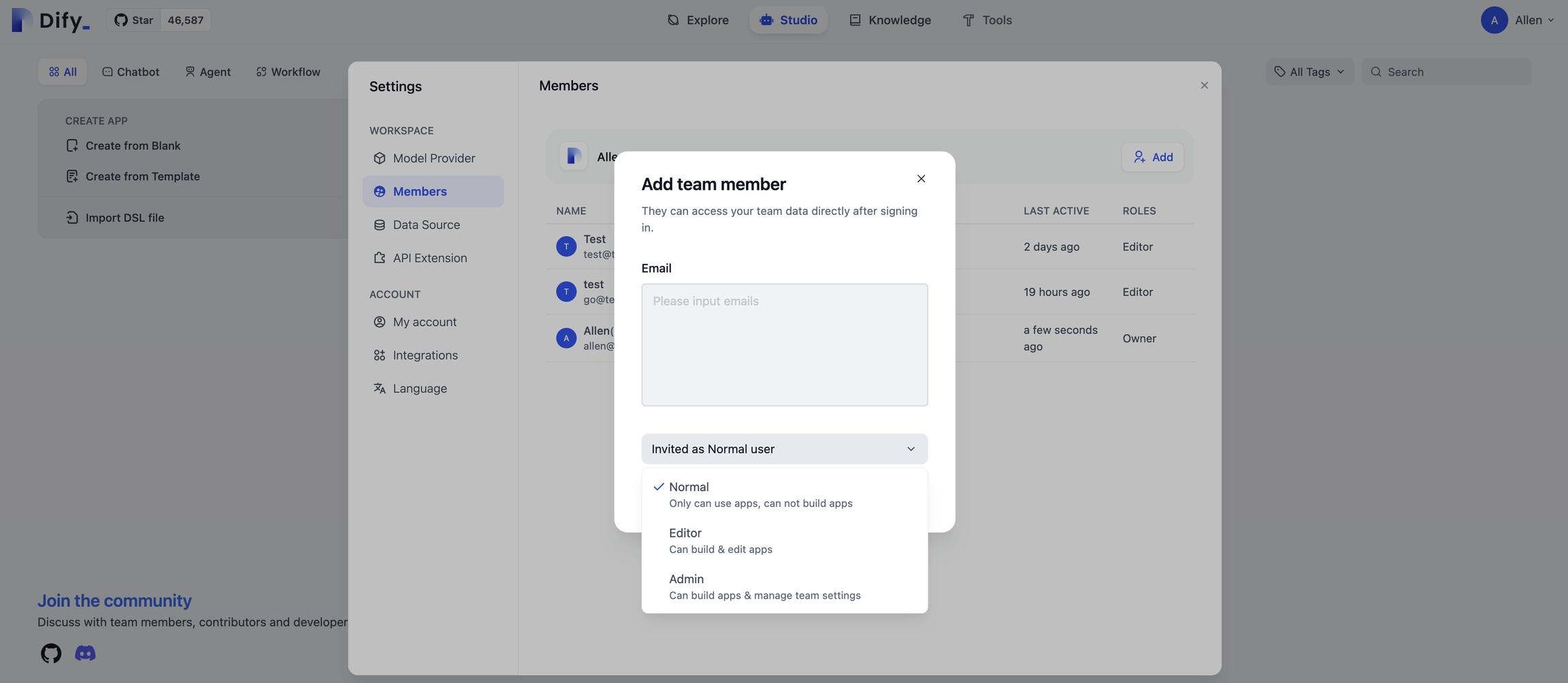

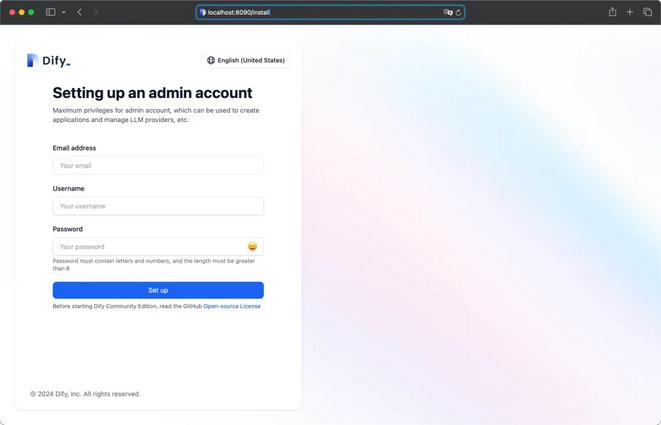

Dify Authentication & Access Control

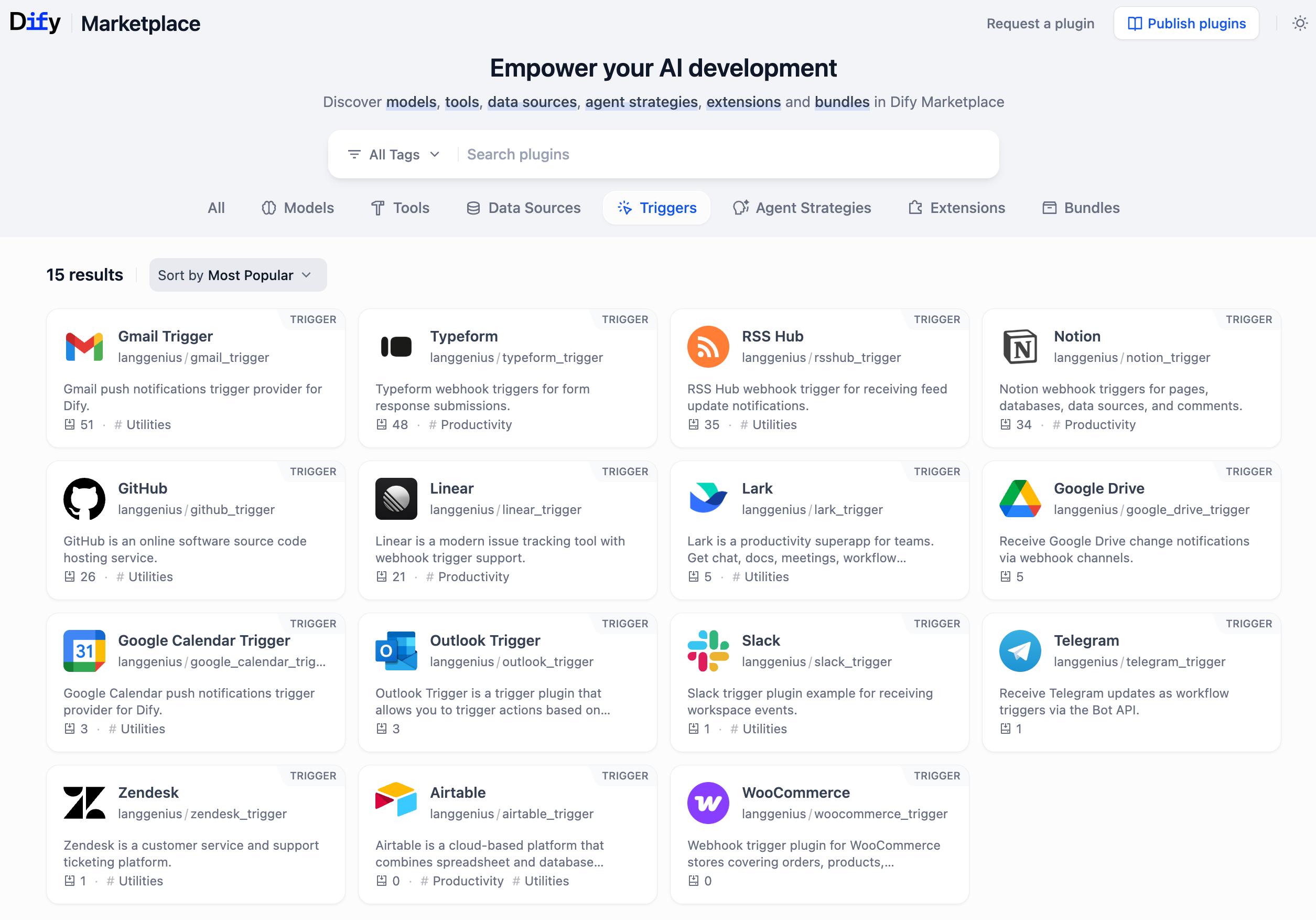

What the screenshot shows:

Dify’s authentication layer with support for OAuth, SSO providers, and role-based access control. You can map users to roles and restrict access to apps or datasets.

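Dify is built with this in mind, though how much you get depends on the edition. You can integrate:

- OAuth login (GitHub and Google in the self-hosted community edition)

- SSO via SAML, OIDC, or OAuth2 identity providers such as Azure AD or Okta (offered in Dify’s Enterprise edition)

- role-based permissions

LDAP is not plug-and-play, but if your directory already federates through an identity provider, Dify can reach it through SSO.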
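The pattern behind “map users to roles and restrict access to apps or datasets” is small enough to sketch. This is a generic illustration, not Dify’s permission model: the group names, role names, and action strings are all hypothetical. The idea is that SSO tells the application who the user is and which directory groups they belong to, and the application turns that into a role.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical mapping from identity-provider groups (for example, AD/LDAP
# groups surfaced through SSO claims) to application roles.
GROUP_TO_ROLE = {
    "ai-platform-admins": "admin",
    "legal-team": "editor",
    "all-staff": "member",
}

# Actions each role may perform on apps and datasets (illustrative).
ROLE_PERMISSIONS = {
    "admin": {"app:edit", "app:run", "dataset:edit", "dataset:read"},
    "editor": {"app:run", "dataset:edit", "dataset:read"},
    "member": {"app:run"},
}

# A user in several groups gets the most privileged matching role.
ROLE_RANK = {"member": 0, "editor": 1, "admin": 2}


@dataclass
class User:
    email: str
    groups: list[str] = field(default_factory=list)


def resolve_role(user: User) -> Optional[str]:
    roles = [GROUP_TO_ROLE[g] for g in user.groups if g in GROUP_TO_ROLE]
    return max(roles, key=ROLE_RANK.__getitem__, default=None)


def is_allowed(user: User, action: str) -> bool:
    role = resolve_role(user)
    return role is not None and action in ROLE_PERMISSIONS[role]


alice = User("alice@example.com", ["all-staff", "legal-team"])
print(resolve_role(alice), is_allowed(alice, "dataset:edit"), is_allowed(alice, "app:edit"))
# editor True False
```

Users whose groups map to no role get nothing by default, which is the safe failure mode when a directory group is renamed.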

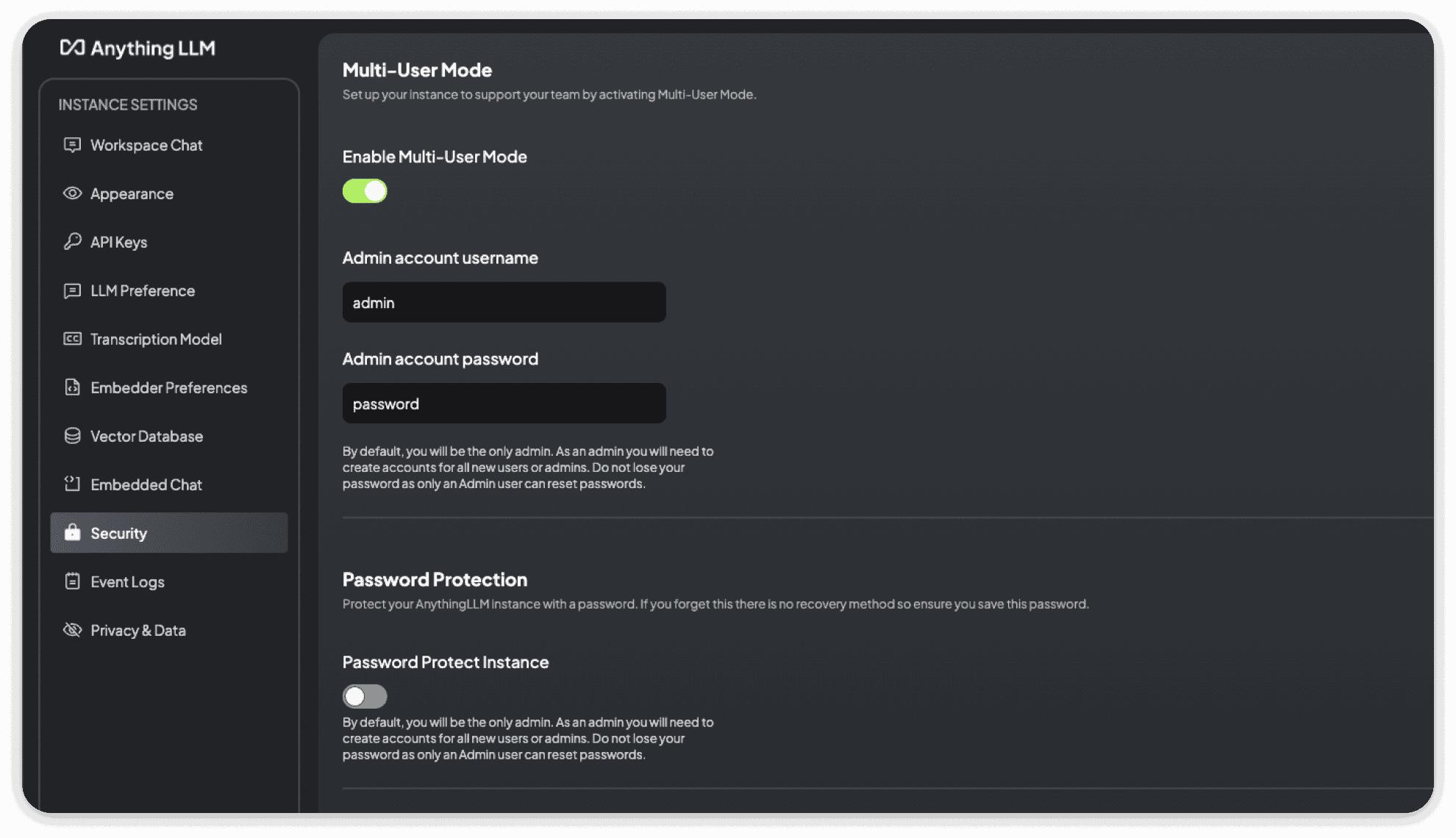

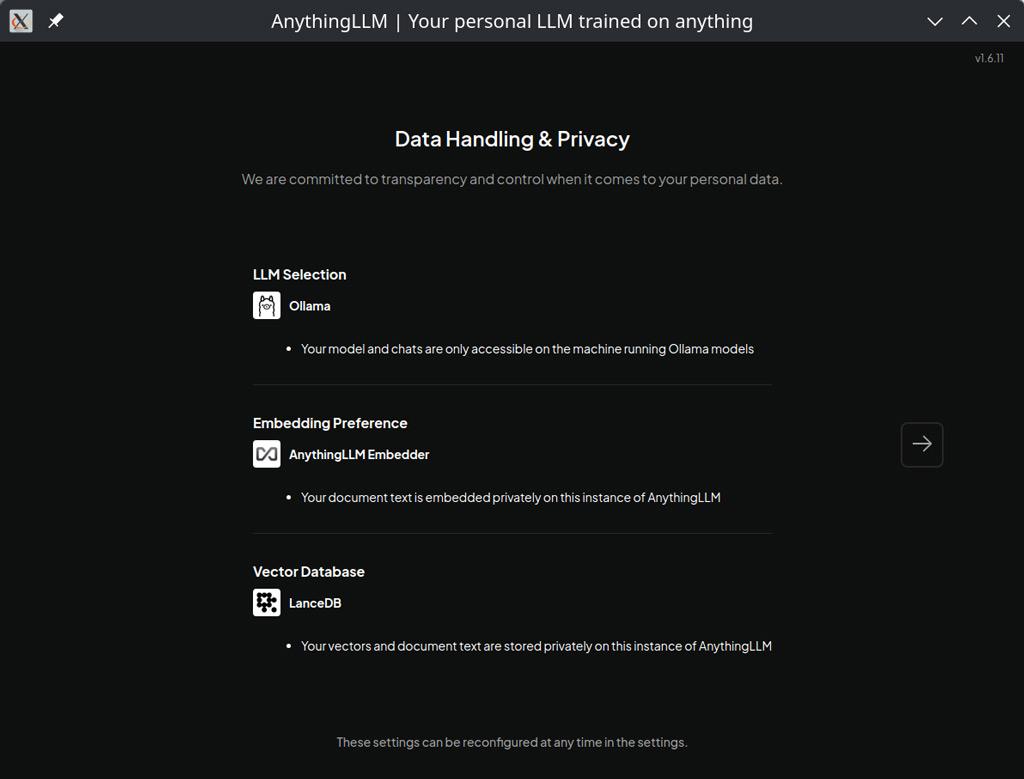

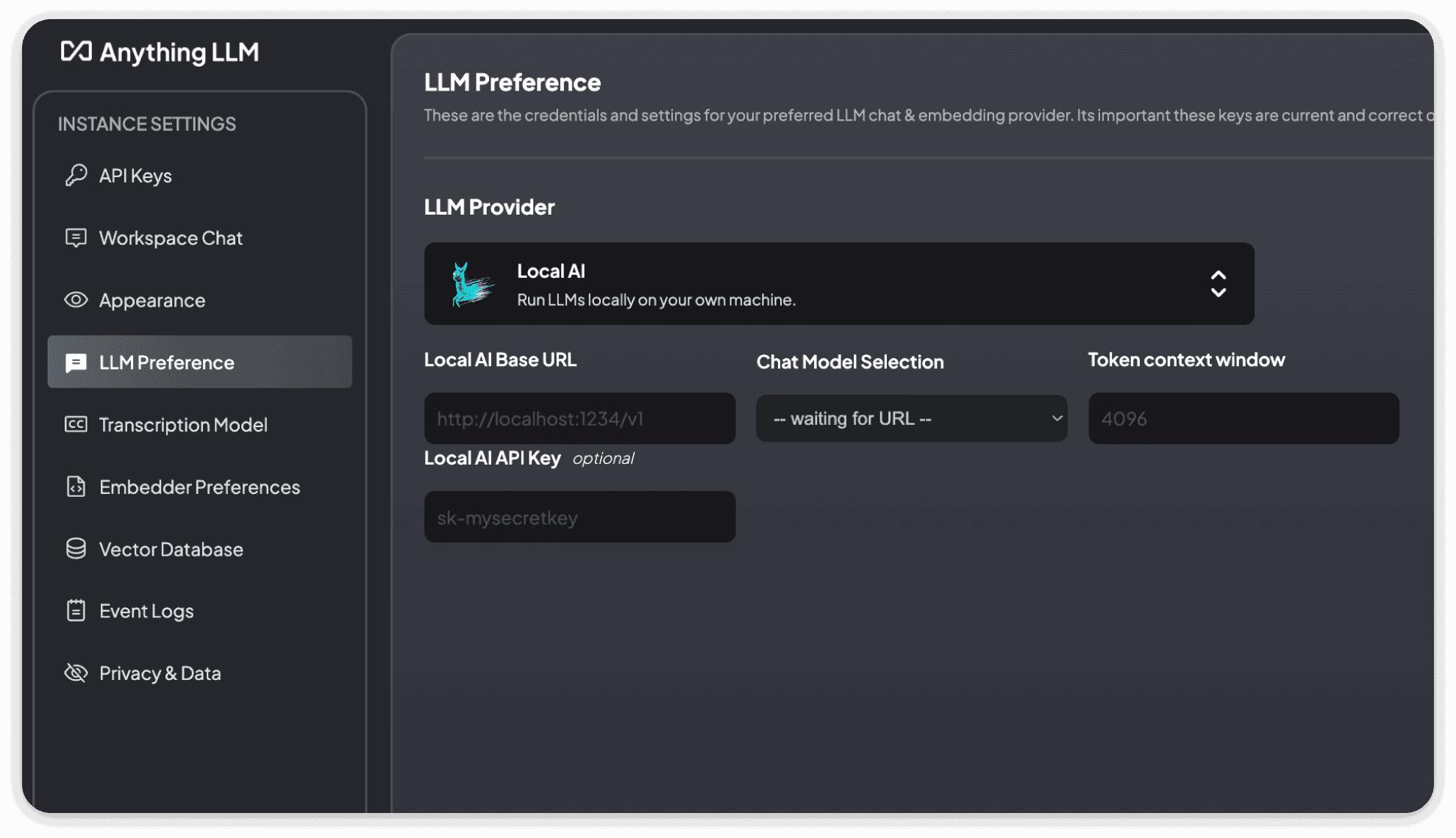

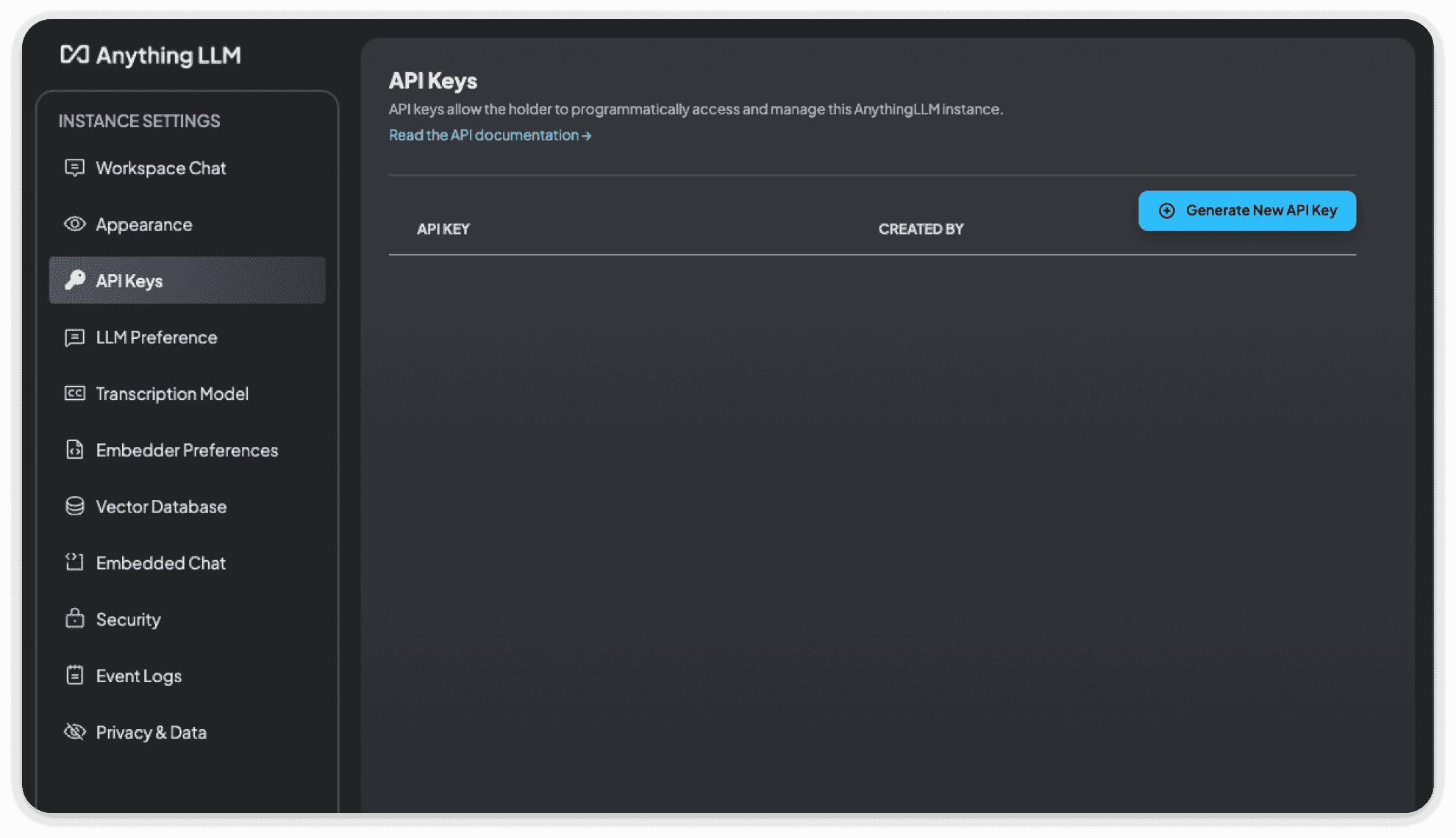

AnythingLLM Authentication Layer

What the screenshot shows:

AnythingLLM’s simpler authentication setup—local users, basic access control, and workspace-level permissions.

AnythingLLM is much simpler.

- local user management

- basic access control

- limited enterprise identity integration

You can integrate it behind a reverse proxy with SSO, but that’s not native. That’s infrastructure work.
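Here is roughly what that infrastructure work looks like. An authenticating reverse proxy (oauth2-proxy is one common choice) handles the SSO login and forwards the verified identity in request headers, and a thin gateway decides which workspaces the caller may reach. The header names, proxy address, and group-to-workspace table below are assumptions for illustration; match them to what your proxy actually sends. The key detail is that identity headers can only be trusted when the request really came through the proxy.

```python
from typing import Mapping, Optional

# Assumed setup; all values are illustrative.
TRUSTED_PROXIES = {"10.0.0.5"}        # address of the SSO-aware reverse proxy
EMAIL_HEADER = "X-Forwarded-Email"    # verified user identity set by the proxy
GROUPS_HEADER = "X-Forwarded-Groups"  # comma-separated directory groups

# Hypothetical mapping from directory groups to AnythingLLM workspace slugs.
GROUP_WORKSPACES = {
    "sales": {"sales-docs"},
    "legal": {"contracts", "policies"},
}


def allowed_workspaces(remote_addr: str, headers: Mapping[str, str]) -> Optional[set[str]]:
    """Return the workspaces the caller may use, or None if the request is untrusted.

    Note: a plain dict is case-sensitive; web frameworks usually hand you a
    case-insensitive header mapping instead.
    """
    # Headers from anywhere other than the proxy could be forged by the client.
    if remote_addr not in TRUSTED_PROXIES:
        return None
    if not headers.get(EMAIL_HEADER):
        return None
    groups = [g.strip() for g in headers.get(GROUPS_HEADER, "").split(",") if g.strip()]
    workspaces: set[str] = set()
    for group in groups:
        workspaces |= GROUP_WORKSPACES.get(group, set())
    return workspaces


print(sorted(allowed_workspaces("10.0.0.5", {
    "X-Forwarded-Email": "bob@corp.example",
    "X-Forwarded-Groups": "sales, legal",
})))
# ['contracts', 'policies', 'sales-docs']
```

None of this mapping lives inside AnythingLLM, so you own it: keeping it in sync with the directory, testing it, and securing the network path between proxy and app.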

SSO Reality Check

| Requirement | AnythingLLM | Dify |
|---|---|---|
| Native SSO | No | Yes |
| LDAP integration | Indirect | Via SSO |
| Role-based access | Basic | Advanced |
| Multi-tenant control | Limited | Strong |

If identity and access control matter—and they always do in enterprise—Dify is ahead.

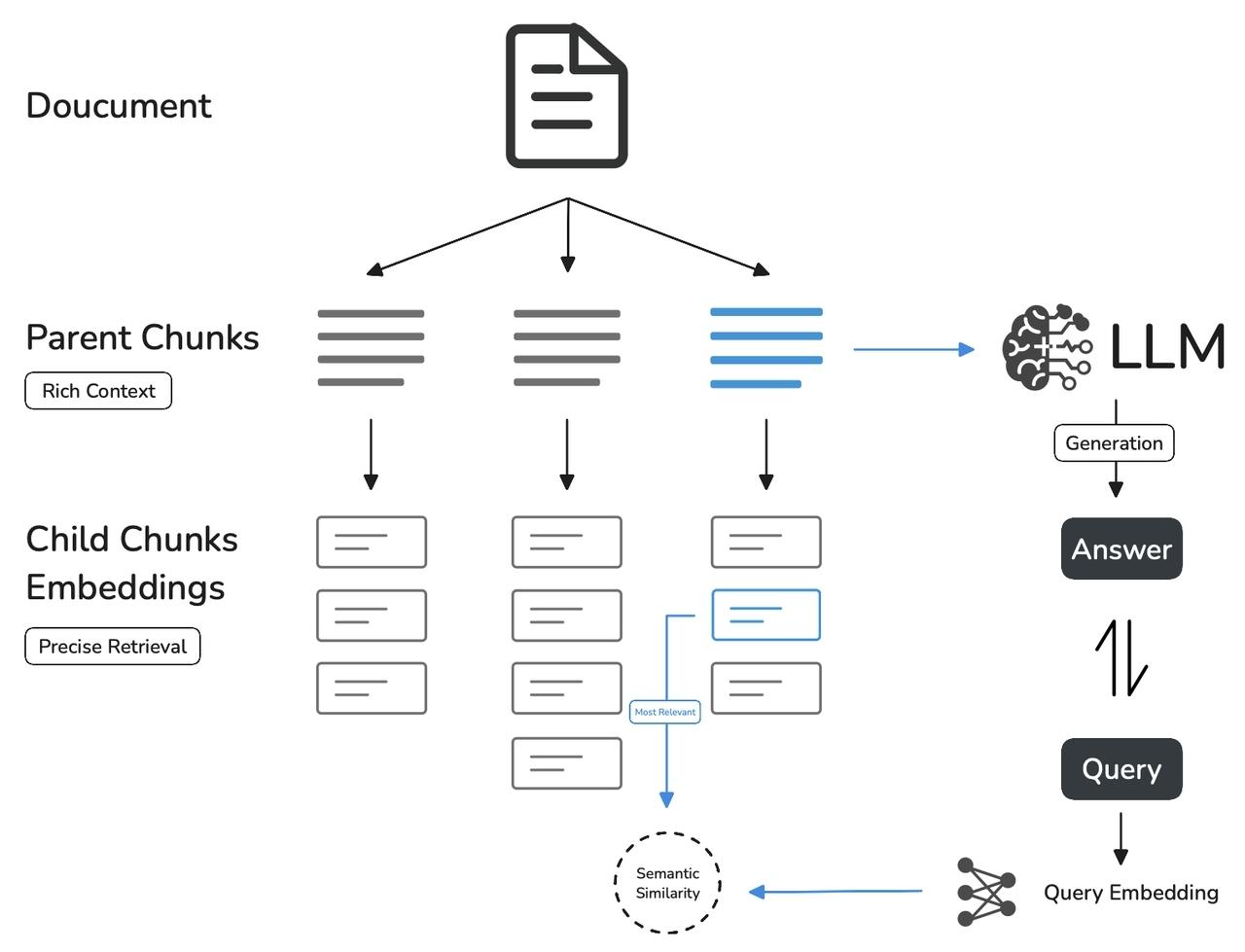

PDF Chunking & Embeddings (Where RAG Actually Lives or Dies)

Everyone talks about “RAG quality.”

Almost nobody talks about chunking strategy.

That’s the difference between:

- useful answers

- and confident nonsense

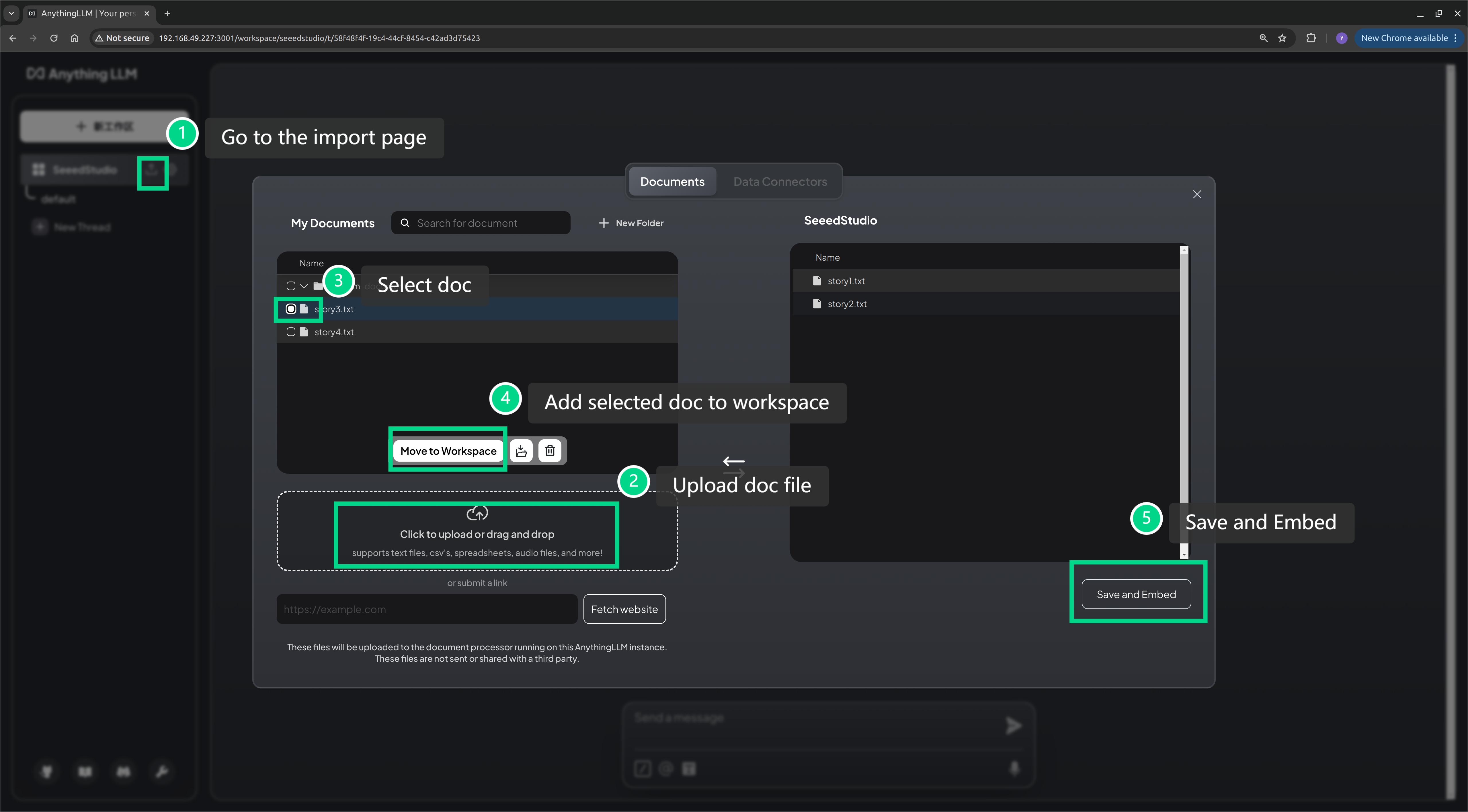

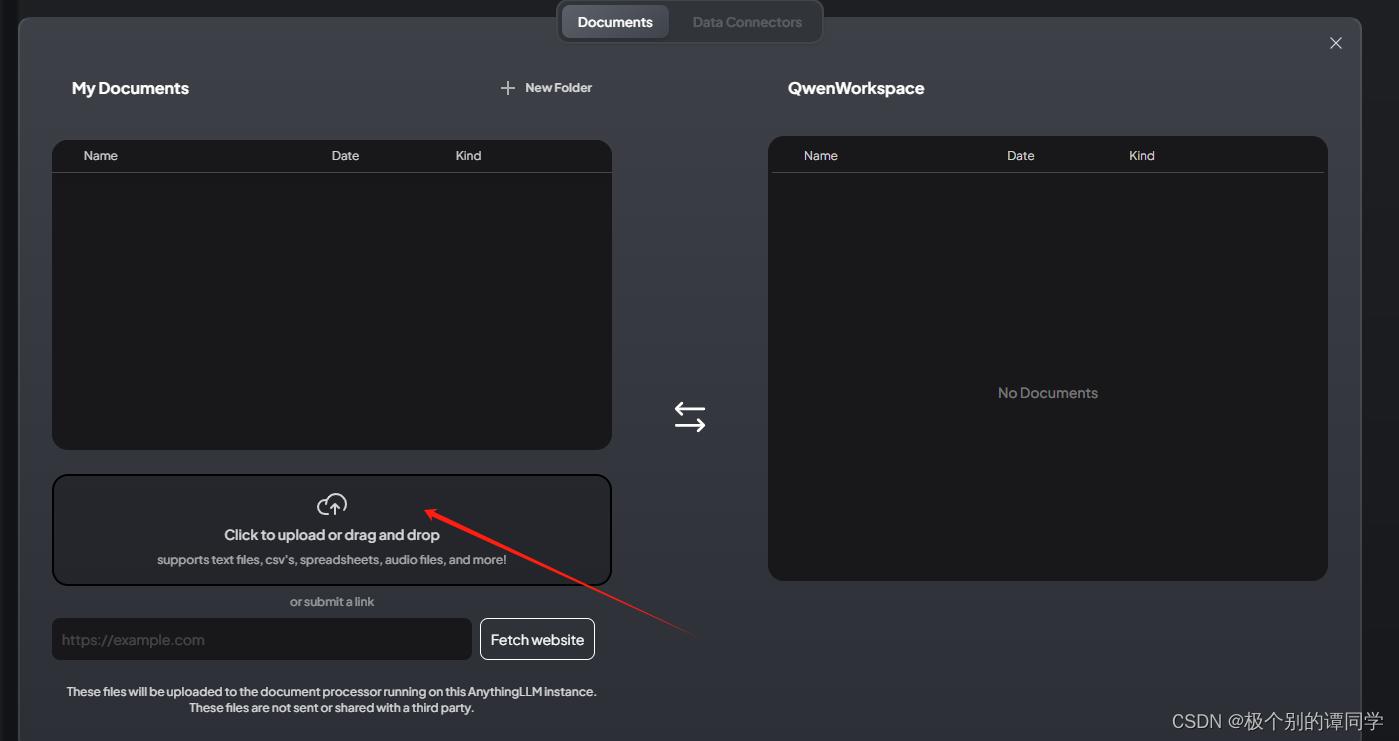

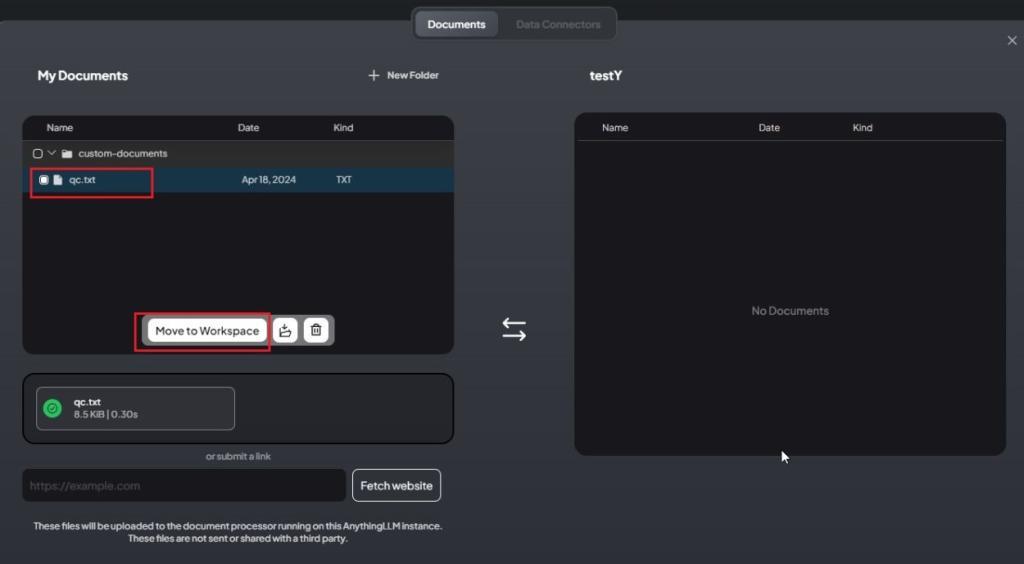

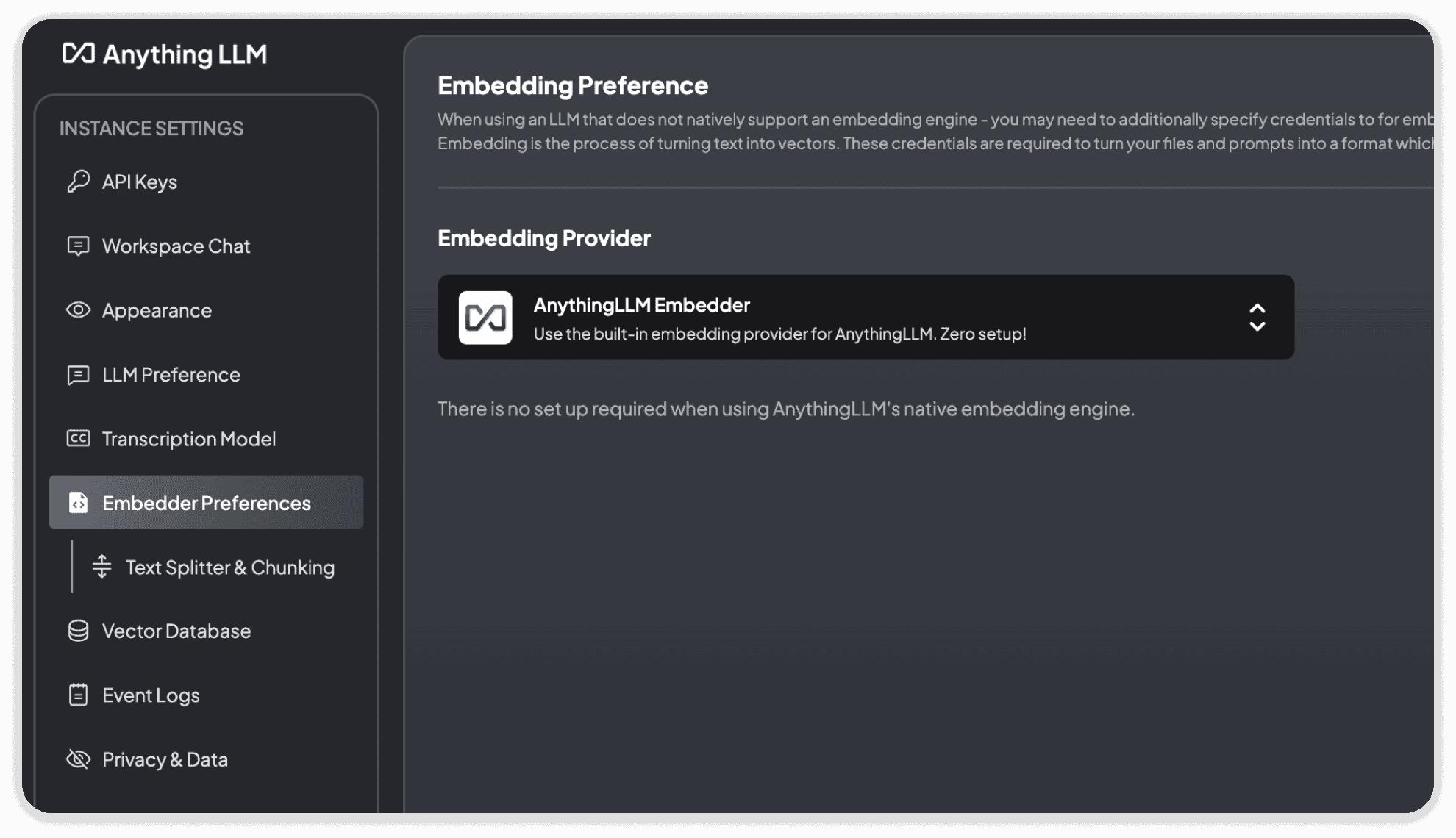

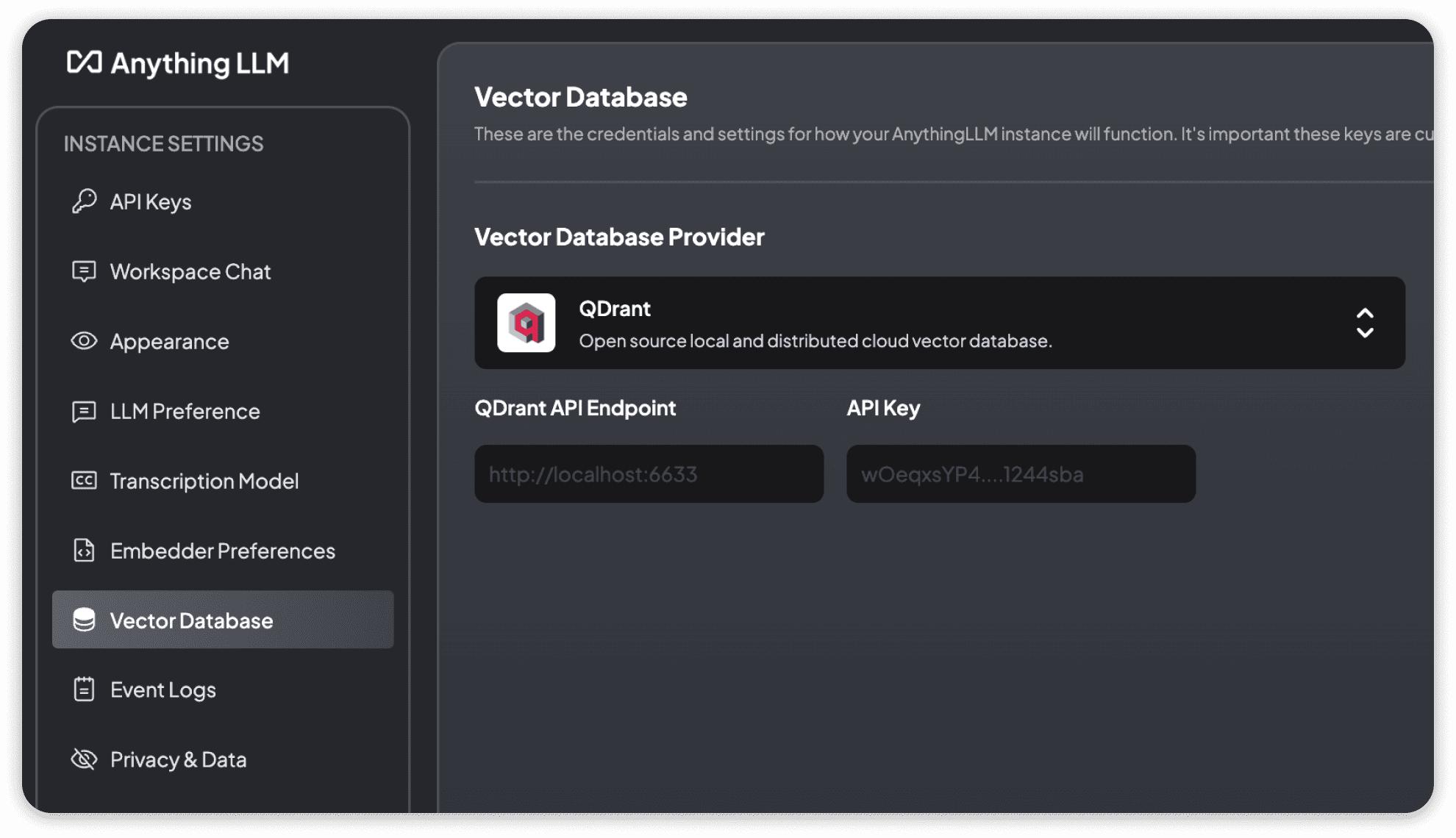

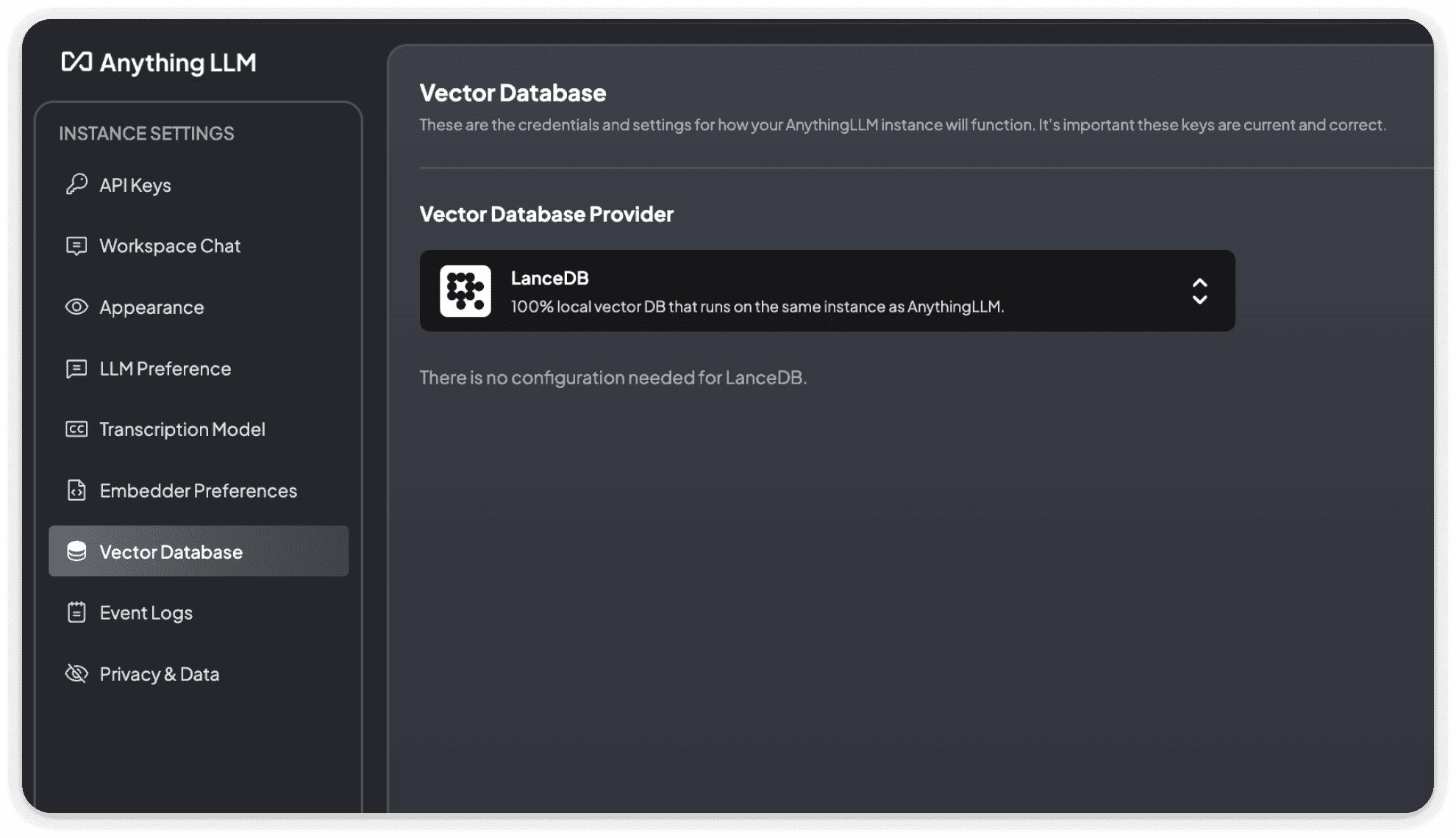

AnythingLLM Document Processing

What the screenshot shows:

AnythingLLM’s ingestion pipeline—documents are uploaded, chunked automatically, and embedded into a vector store with minimal configuration.

AnythingLLM keeps it simple:

- one global chunk size and overlap setting, applied the same way to every document

- automatic embedding

- minimal tuning

That’s good for speed.

But not for control.

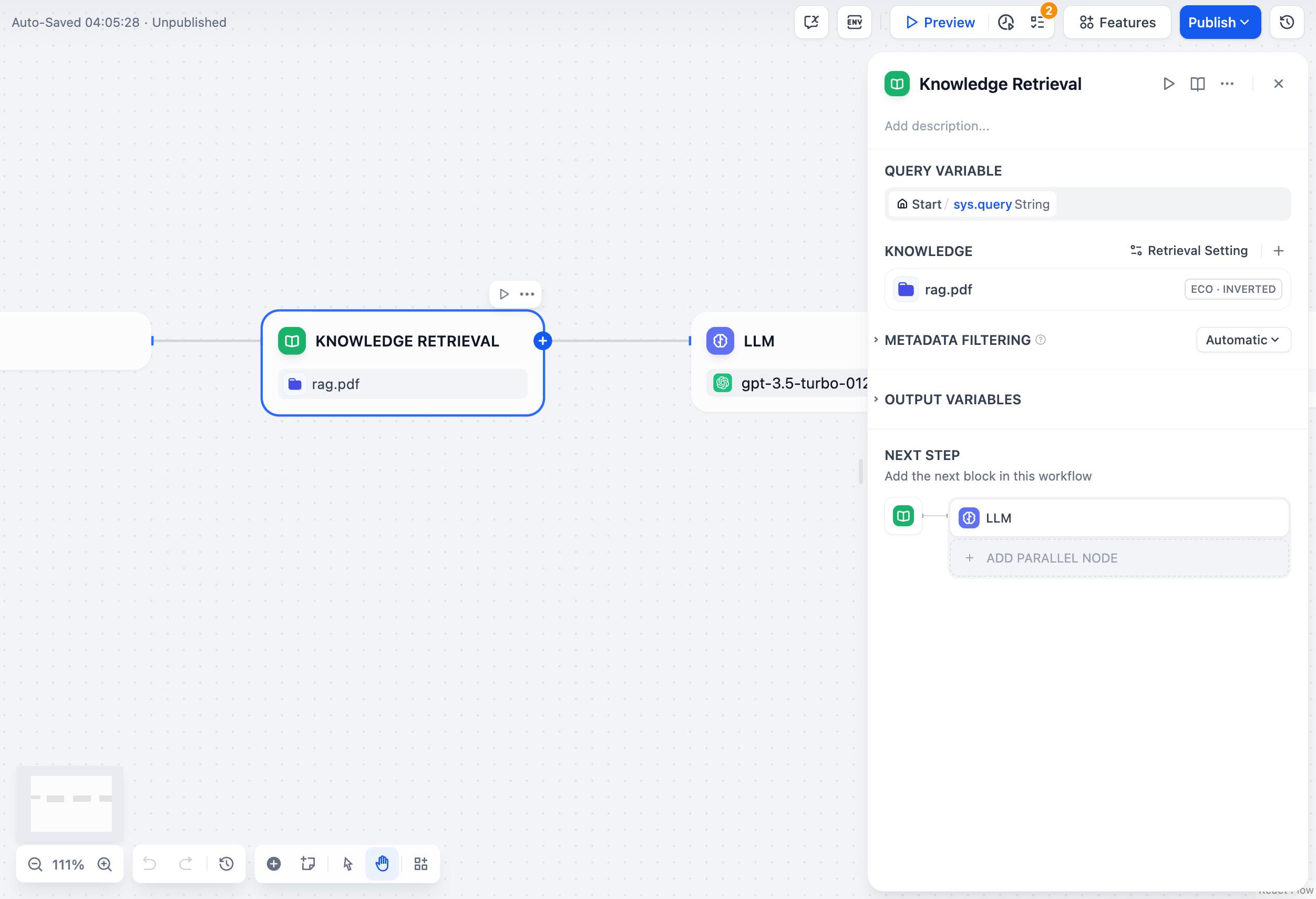

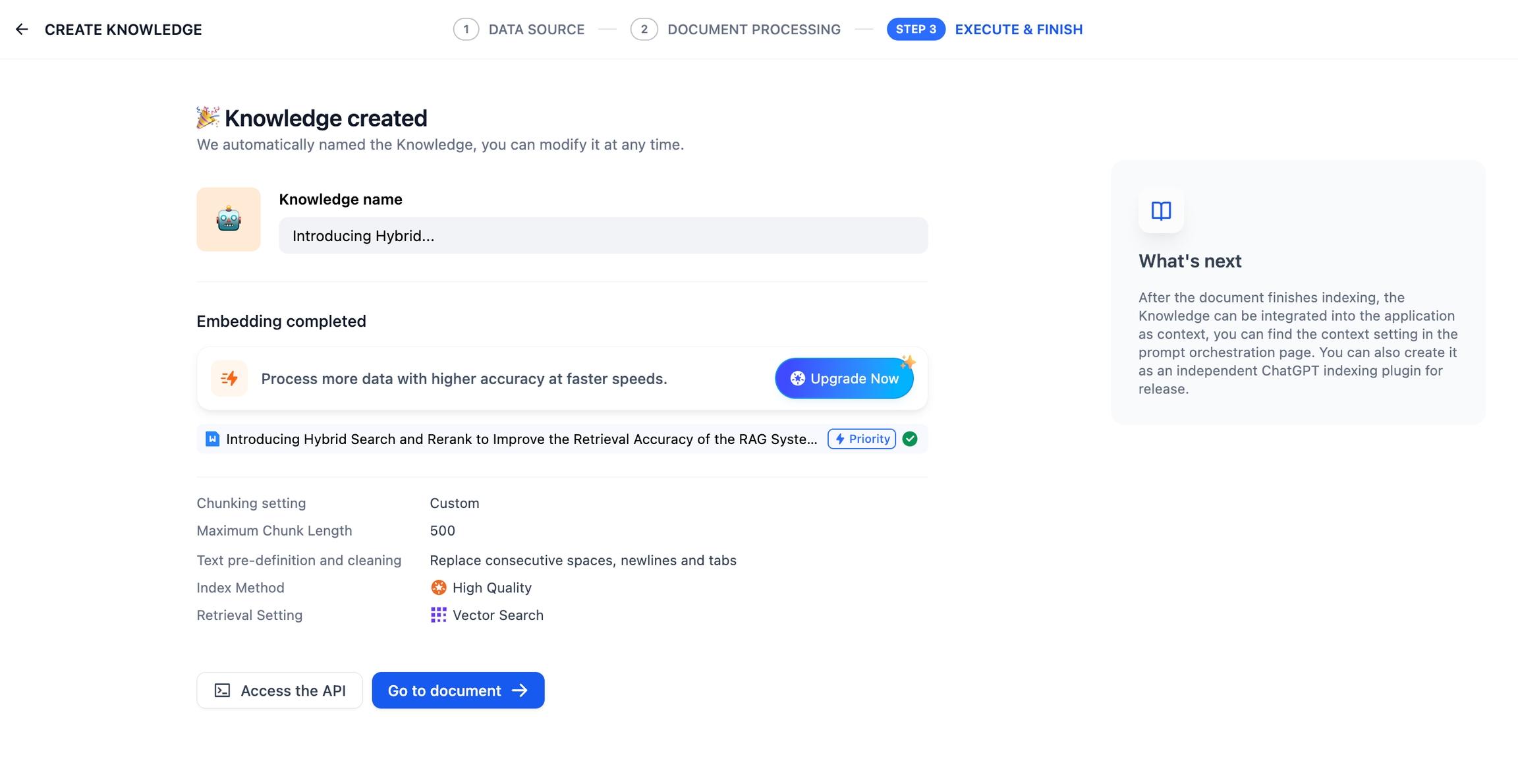

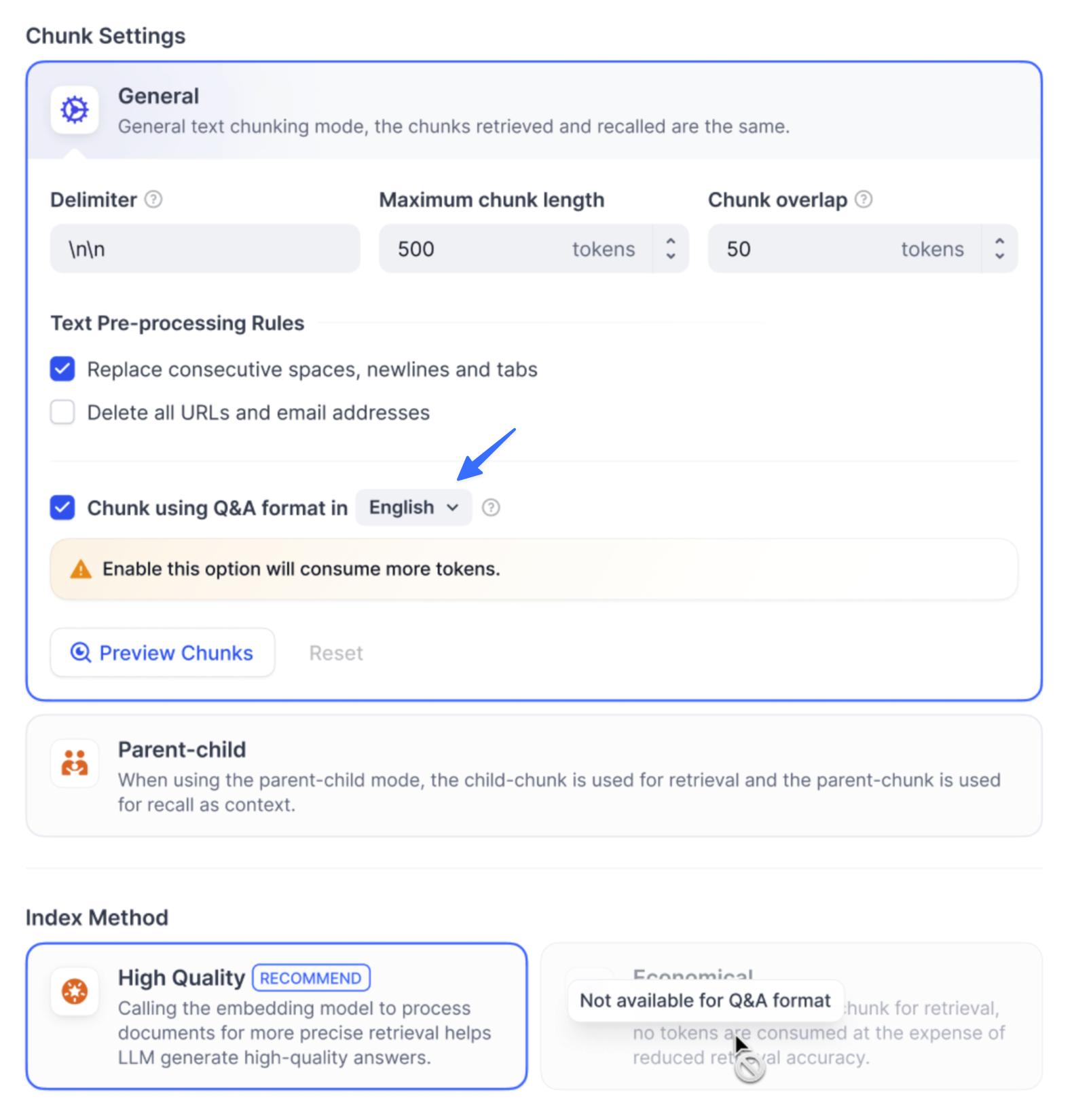

Dify Knowledge Base & Chunking Controls

What the screenshot shows:

Dify’s configurable chunking and embedding pipeline, including chunk size, overlap, and indexing strategies.

Dify gives you knobs:

- chunk size control

- overlap tuning

- embedding configuration

- indexing strategies

This matters when dealing with:

- long PDFs

- structured documents

- technical manuals
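Dify’s chunk settings pair a segment delimiter with a maximum chunk length and a chunk overlap. The sketch below shows the general idea behind that kind of structure-aware splitting: cut on a delimiter first (paragraph breaks here), then pack the pieces up to a size limit, carrying some overlap forward. It is a generic illustration, not Dify’s implementation, and it counts characters where real pipelines usually count tokens.

```python
def chunk_by_delimiter(text: str, delimiter: str = "\n\n",
                       max_len: int = 500, overlap: int = 50) -> list[str]:
    """Split on a delimiter first, then pack pieces into chunks of at most max_len chars.

    When a chunk is closed, its last `overlap` characters are carried into the
    next chunk if they fit. Pieces longer than max_len are hard-split first.
    """
    if not 0 <= overlap < max_len:
        raise ValueError("need 0 <= overlap < max_len")

    # 1. Structural split: paragraphs come apart before anything else.
    pieces: list[str] = []
    for part in text.split(delimiter):
        part = part.strip()
        while len(part) > max_len:  # e.g. a huge table flattened out of a PDF
            pieces.append(part[:max_len])
            part = part[max_len:]
        if part:
            pieces.append(part)

    # 2. Greedy packing: keep adding pieces until the next one would overflow.
    chunks: list[str] = []
    current = ""
    for piece in pieces:
        candidate = f"{current}\n{piece}" if current else piece
        if len(candidate) <= max_len:
            current = candidate
            continue
        chunks.append(current)
        tail = current[-overlap:] if overlap else ""
        seeded = f"{tail}\n{piece}" if tail else piece
        current = seeded if len(seeded) <= max_len else piece
    if current:
        chunks.append(current)
    return chunks


manual = ("1. Safety\n\nDisconnect power before servicing.\n\n"
          "2. Installation\n\nMount the unit on a level surface.")
for chunk in chunk_by_delimiter(manual, max_len=60, overlap=15):
    print(repr(chunk))
```

Splitting on structure first means a section heading tends to stay attached to the text under it, which is exactly what fixed-size windows break in long manuals and reports.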

Why Chunking Is Not an Optional Detail

Let’s make this concrete.

| Scenario | AnythingLLM Outcome | Dify Outcome |
|---|---|---|
| Short PDFs | Good | Good |
| Long reports | Context fragmentation | Tunable |
| Technical docs | Missed relationships | Better retrieval |
| Legal documents | Risky | Controlled |

If your documents are simple, AnythingLLM works fine.

If they’re complex, Dify gives you control you’ll eventually need.

Example: Chunking Impact

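```python
# Simplified conceptual difference (illustrative values; check each tool's
# settings for its actual defaults)

def split_text(text: str, size: int, overlap: int = 0) -> list[str]:
    """Cut text into fixed-size character windows; adjacent windows share `overlap` chars."""
    step = size - overlap  # requires overlap < size
    return [text[i:i + size] for i in range(0, max(len(text) - overlap, 1), step)]

document = "Extracted PDF text goes here. " * 100  # stand-in for a parsed PDF

# AnythingLLM-style: one global chunk size, no overlap
chunks = split_text(document, size=500)

# Dify-style: size and overlap tuned per knowledge base
chunks = split_text(document, size=800, overlap=200)
```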

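That overlap is the difference between:

- coherent answers

- broken context

Without overlap, a sentence that straddles a chunk boundary is cut in half, and neither half may score well at retrieval time. With overlap, it lands intact in the following chunk, provided it is shorter than the overlap.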
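A quick experiment makes the boundary problem visible. The sketch plants one sentence so that it straddles the 800-character boundary, then checks whether any single chunk contains it whole, with and without overlap. The filler text, the sentence, and the sizes are arbitrary choices for illustration.

```python
def split_text(text: str, size: int, overlap: int = 0) -> list[str]:
    """Fixed-size character windows; adjacent windows share `overlap` characters."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be in [0, size)")
    step = size - overlap
    return [text[i:i + size] for i in range(0, max(len(text) - overlap, 1), step)]


fact = "The termination clause requires 90 days written notice."
# Pad so the fact starts 20 characters before the 800-character boundary.
document = "a" * 780 + fact + "b" * 500


def fact_survives(chunks: list[str]) -> bool:
    """True if at least one chunk contains the whole sentence."""
    return any(fact in chunk for chunk in chunks)


print(fact_survives(split_text(document, size=800)))               # False
print(fact_survives(split_text(document, size=800, overlap=200)))  # True
```

Overlap is not free. With 800-character chunks and a 200-character overlap, each chunk repeats a quarter of its predecessor, so the index holds about a third more chunks for the same document.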

API Endpoints (Where Automation Meets Reality)

This is where most comparisons fall apart.

Because the real question isn’t:

“Does it have an API?”

It’s:

“Can I reliably trigger this from another system?”

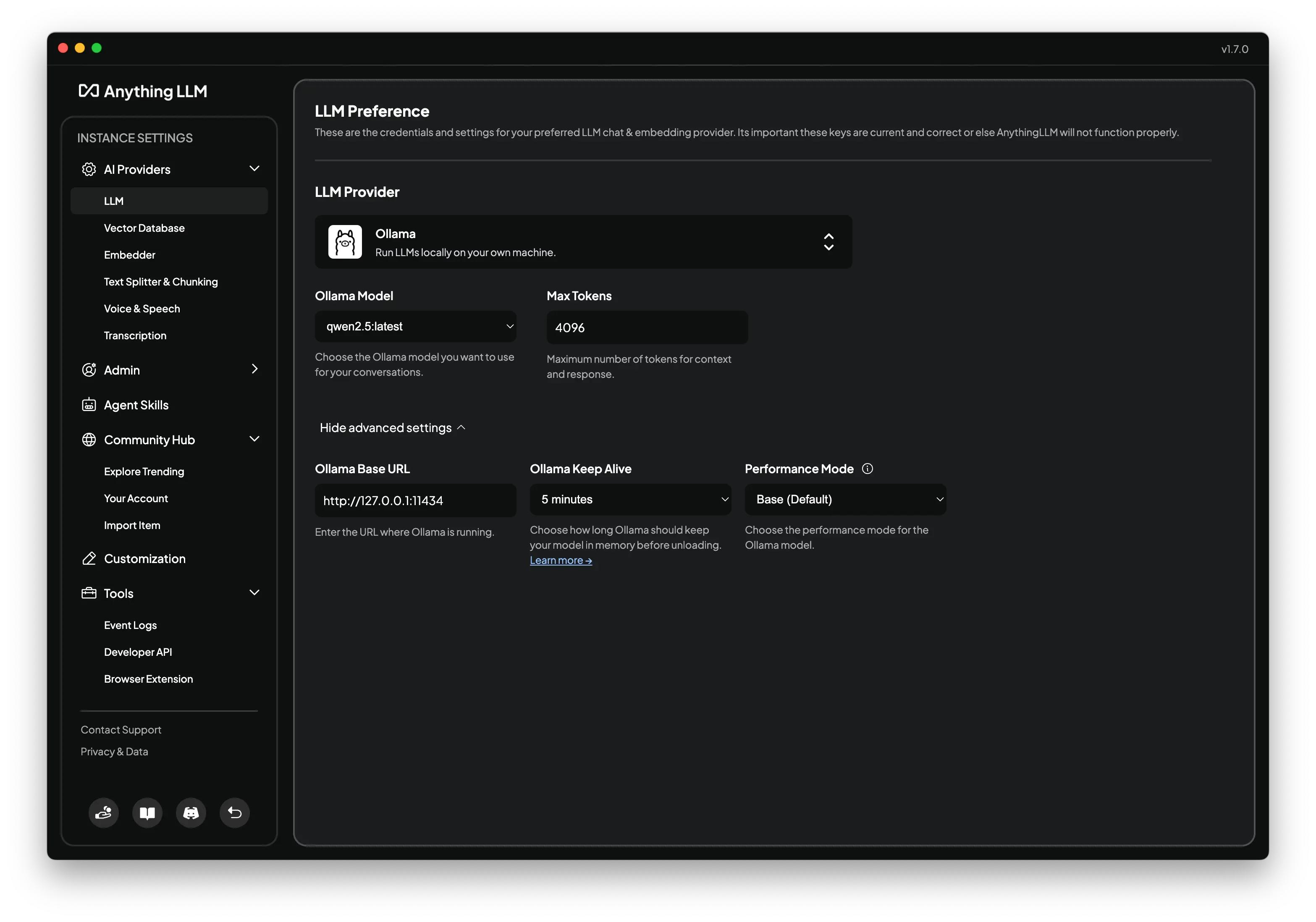

AnythingLLM API Usage

What the screenshot shows:

AnythingLLM’s API endpoint for querying a workspace—simple REST calls that return responses based on indexed documents.

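AnythingLLM’s API is straightforward. The workspace is addressed by its slug in the URL, and each request carries an API key generated in the instance settings:

```shell
curl -X POST http://localhost:3001/api/v1/workspace/sales-docs/chat \
  -H "Authorization: Bearer YOUR_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "message": "Summarize document",
    "mode": "query"
  }'
```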

It works.

It’s simple.

But it’s limited.

You’re calling a chat interface, not orchestrating a system.
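The same call from another system might look like this, using only the Python standard library. It assumes the developer API route `/api/v1/workspace/{slug}/chat`, a bearer API key, and a `textResponse` field in the JSON reply. Confirm all three against the API docs your own instance serves, since routes can change between versions.

```python
import json
import urllib.request

BASE_URL = "http://localhost:3001"  # assumed default AnythingLLM server port
API_KEY = "YOUR_API_KEY"            # placeholder: generate one in the instance settings


def build_chat_request(workspace_slug: str, message: str,
                       mode: str = "query") -> urllib.request.Request:
    """Build (but do not send) a chat request scoped to one workspace.

    mode="query" is meant to answer only from the workspace's documents;
    mode="chat" also lets the model draw on its general knowledge.
    """
    payload = {"message": message, "mode": mode}
    return urllib.request.Request(
        url=f"{BASE_URL}/api/v1/workspace/{workspace_slug}/chat",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Authorization": f"Bearer {API_KEY}",
                 "Content-Type": "application/json"},
        method="POST",
    )


def ask_workspace(workspace_slug: str, message: str) -> str:
    """Send the request and return the answer text (assumed field: textResponse)."""
    with urllib.request.urlopen(build_chat_request(workspace_slug, message), timeout=60) as resp:
        body = json.load(resp)
    return body.get("textResponse", "")


# Usage (requires a running instance):
# print(ask_workspace("sales-docs", "Summarize document"))
```

Note what the payload can express: a workspace, a message, and a mode. There is no slot for branching logic or typed inputs, which is the limitation described above.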

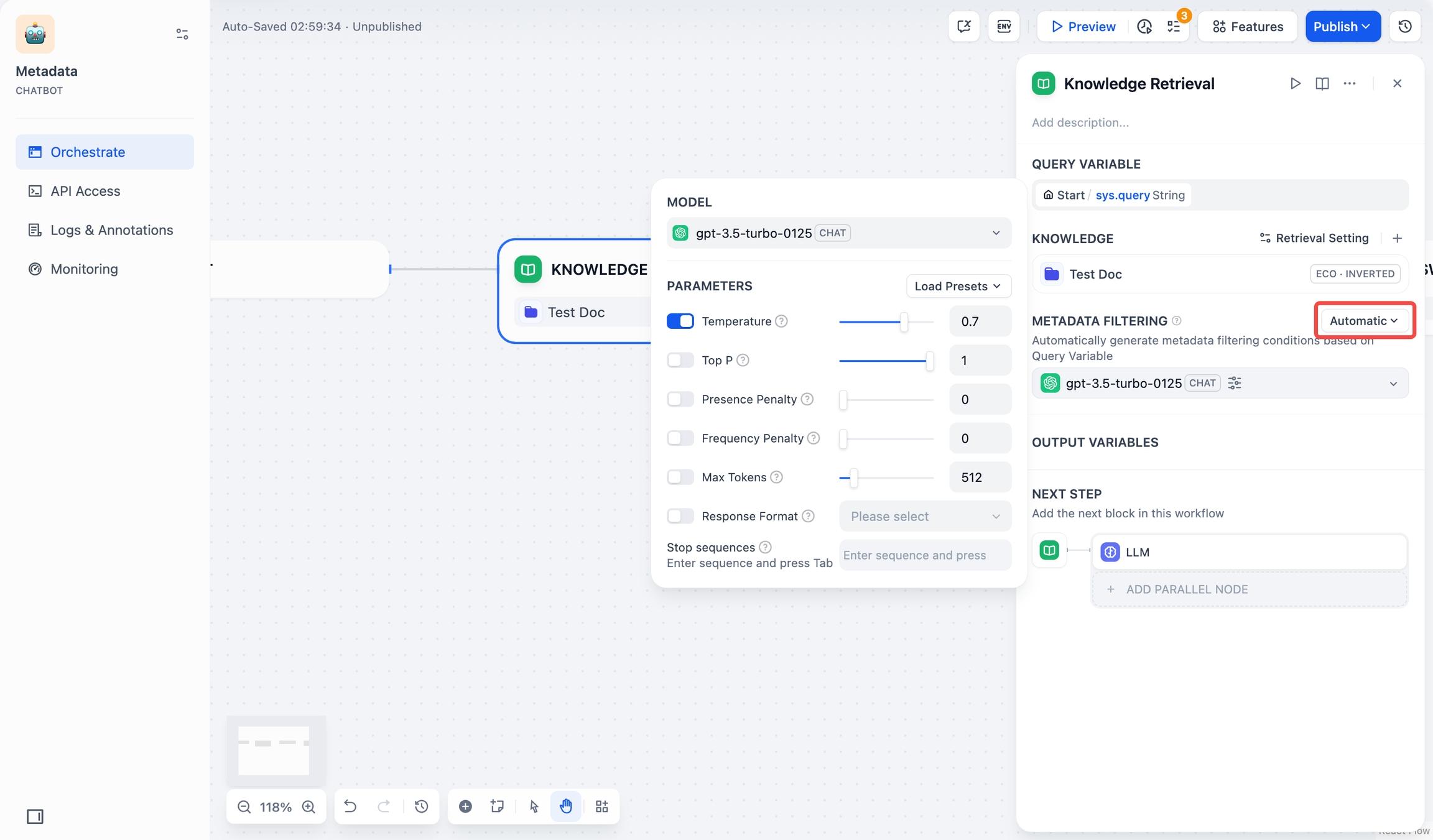

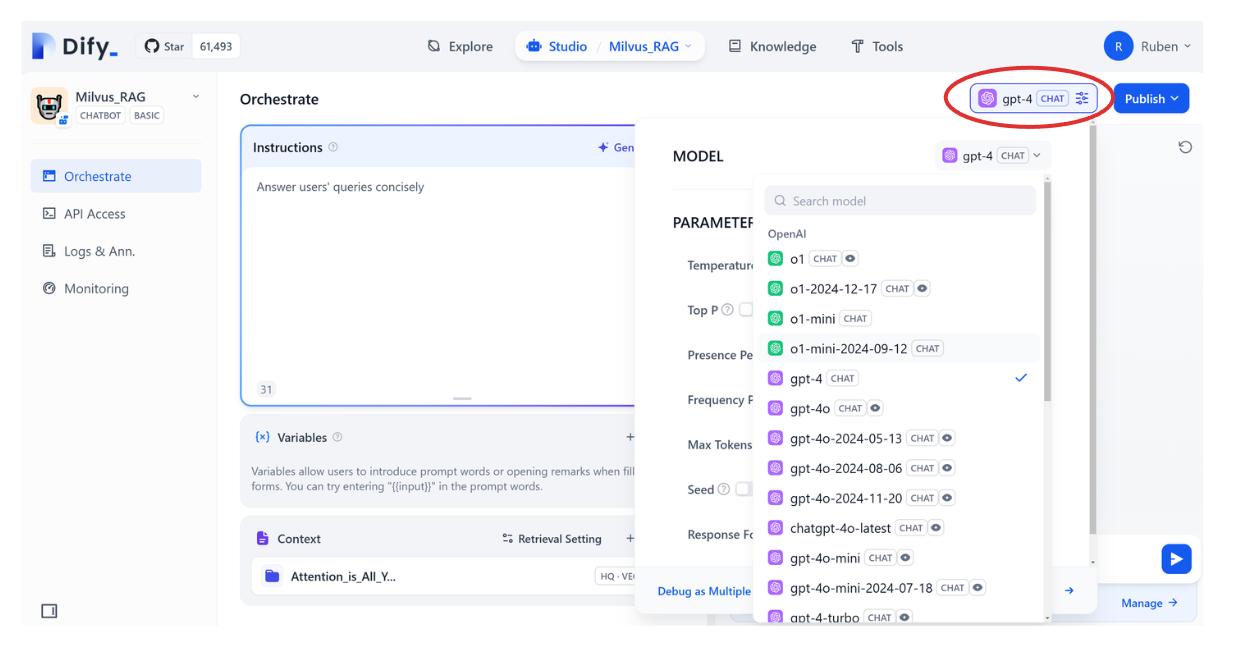

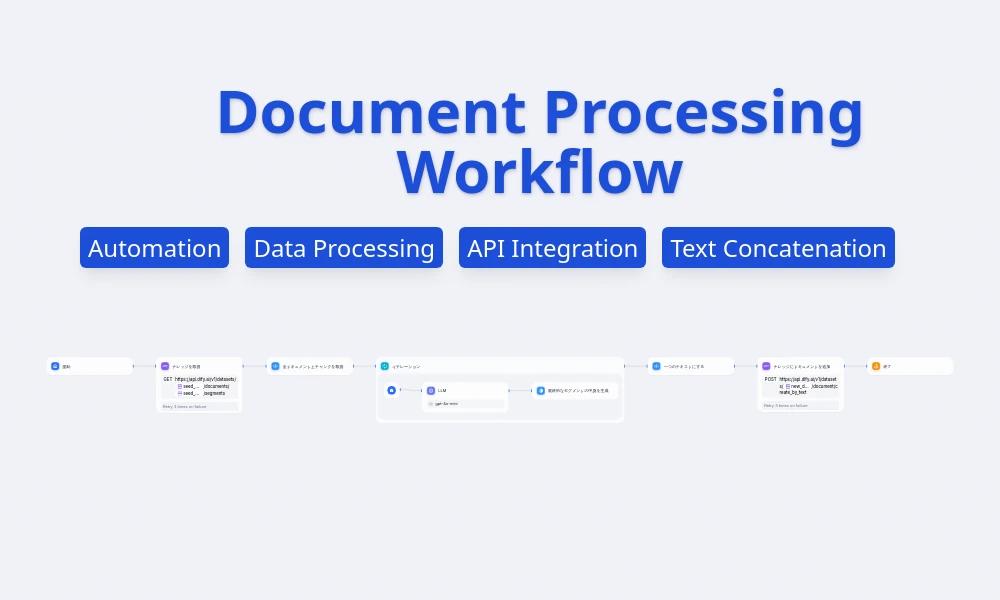

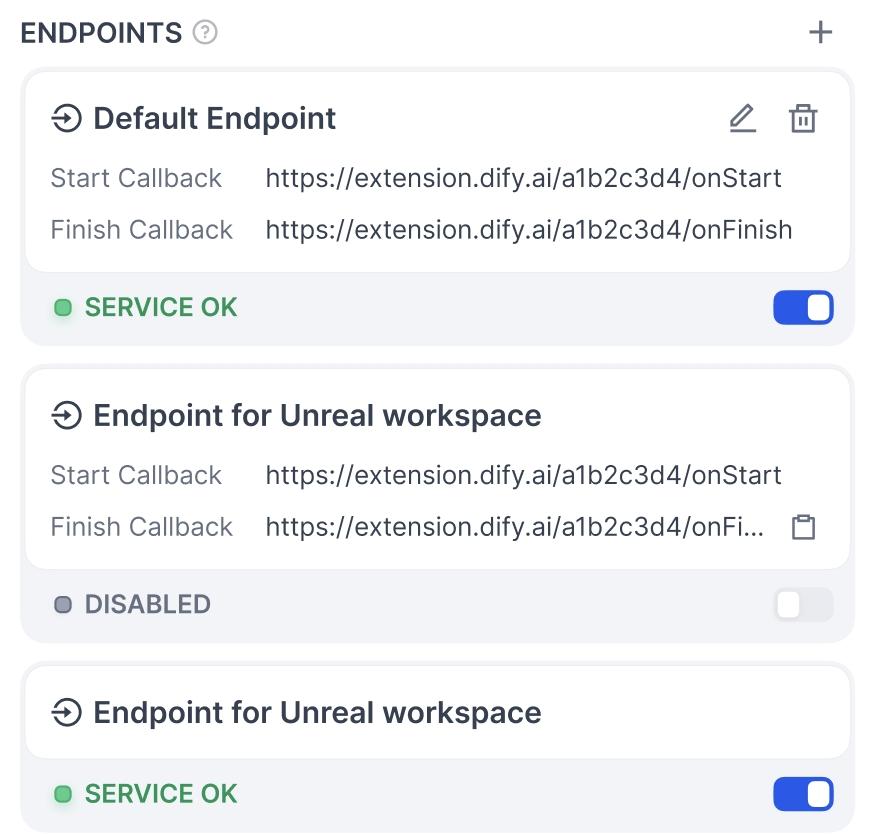

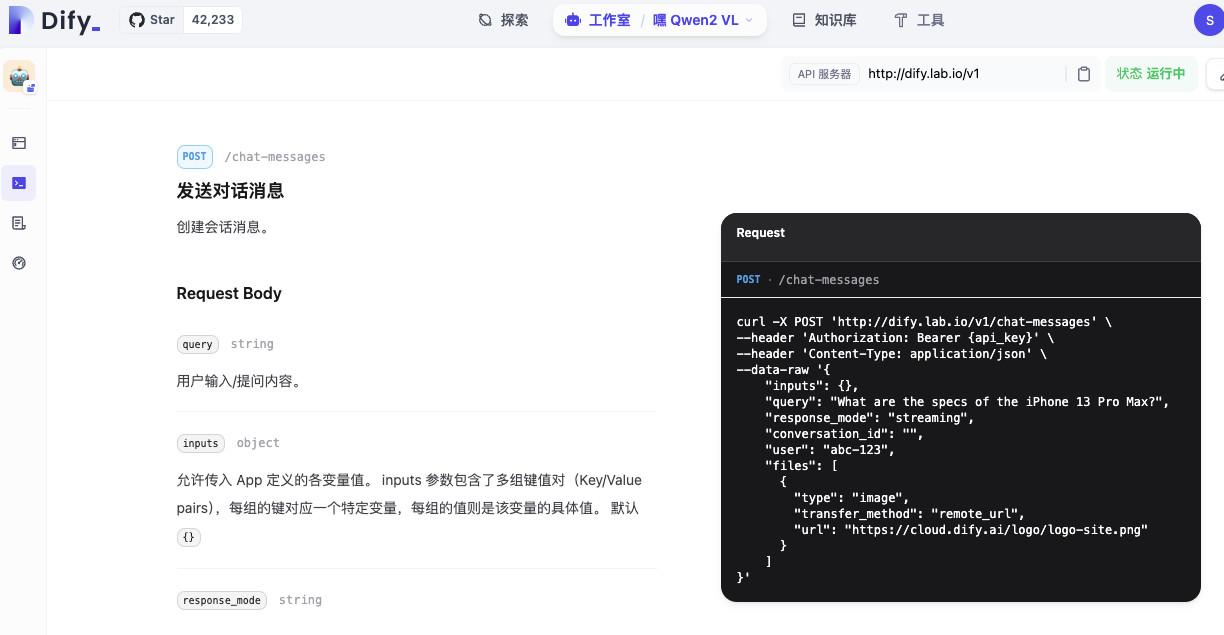

Dify API & Workflow Triggering

What the screenshot shows:

Dify’s API interface for triggering full workflows or apps, not just single queries—allowing integration into automation pipelines.

Dify treats APIs as first-class:

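```shell
curl -X POST https://api.dify.ai/v1/chat-messages \
  -H "Authorization: Bearer YOUR_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "query": "Analyze this document",
    "inputs": {"doc_type": "legal"},
    "response_mode": "blocking",
    "user": "analyst-01"
  }'
```

The `user` field is an identifier you choose for the caller and is required, and the keys in `inputs` must match the variables defined in the app.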

But more importantly:

You can trigger entire pipelines, not just queries.
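For Workflow-type apps, Dify’s service API exposes `POST /v1/workflows/run`, which takes the workflow’s input variables plus `response_mode` and a caller-chosen `user` identifier. The sketch below builds that request with the standard library. The key and the input names (`doc_type`, `document_text`) are placeholders, the input names must match the variables in your workflow’s Start node, and the `data.outputs` response shape is an assumption to verify against the API page Dify generates for your app.

```python
import json
import urllib.request

BASE_URL = "https://api.dify.ai/v1"  # self-hosted: point at your own instance's /v1
API_KEY = "YOUR_API_KEY"             # placeholder: the workflow app's API key


def build_workflow_request(inputs: dict, user: str) -> urllib.request.Request:
    """Build (but do not send) a request that runs a whole workflow, not one chat turn."""
    payload = {
        "inputs": inputs,             # keys must match the workflow's Start-node variables
        "response_mode": "blocking",  # wait for the final result instead of streaming
        "user": user,                 # identifier for the end user or calling system
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/workflows/run",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Authorization": f"Bearer {API_KEY}",
                 "Content-Type": "application/json"},
        method="POST",
    )


def run_workflow(inputs: dict, user: str) -> dict:
    with urllib.request.urlopen(build_workflow_request(inputs, user), timeout=120) as resp:
        body = json.load(resp)
    # Assumed response shape: final node outputs under data.outputs.
    return body.get("data", {}).get("outputs", {})


# Usage (requires a published workflow app):
# run_workflow({"doc_type": "legal", "document_text": "..."}, user="n8n-contract-review")
```

This is the shape an n8n or Make.com HTTP node would send: one POST, typed inputs, structured outputs, with the branching and retrieval happening inside the workflow.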

API Comparison

| Capability | AnythingLLM | Dify |
|---|---|---|
| Simple query API | Yes | Yes |
| Workflow triggering | No | Yes |
| Parameterized inputs | Limited | Strong |
| Automation integration | Basic | Advanced |

If you’re plugging into:

- Make.com

- n8n

- internal workflows

Dify integrates more naturally.

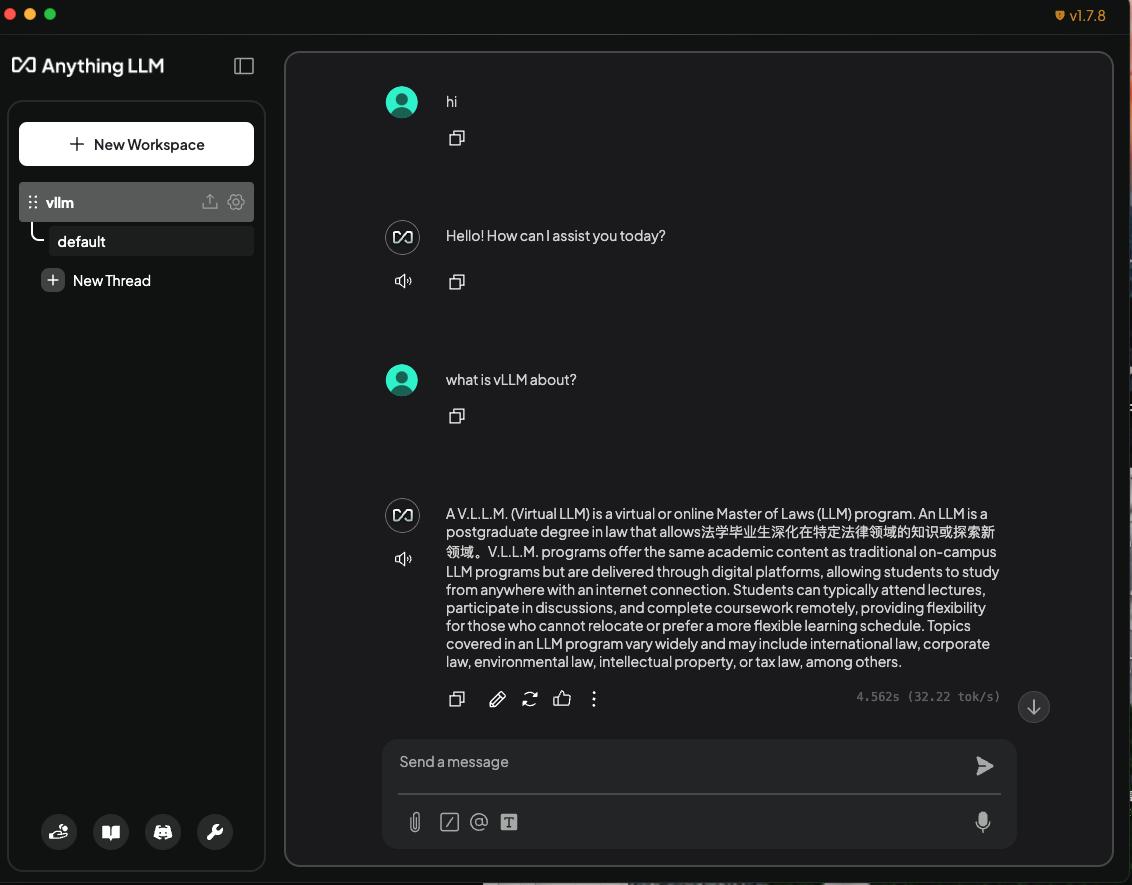

The Subtle but Critical Difference

AnythingLLM is:

“Ask questions about documents.”

Dify is:

“Build systems that use documents.”

That difference compounds over time.

When Each Tool Actually Makes Sense

| Situation | Choose |
|---|---|
| Quick internal RAG tool | AnythingLLM |
| Enterprise knowledge system | Dify |
| Minimal setup required | AnythingLLM |
| Complex workflows + automation | Dify |
| No identity system needed | AnythingLLM |
| SSO + role control required | Dify |

A Thought Worth Considering

Most teams start with AnythingLLM because it’s simple.

Many eventually move to something like Dify—not because they want to, but because they need:

- control

- structure

- integration

Final Thought

RAG platforms don’t fail because of models.

They fail because of:

- bad chunking

- weak access control

- poor integration design

So the real question isn’t:

“Which tool is better?”

It’s:

“Are you building a demo—or a system people will actually depend on?”